It’s not often that you meet experienced marketers who are both nice people and good at their jobs.

Dave Davies is an SEO veteran we featured in our 25 Technical SEO Experts on Twitter roundup who has been in the industry for longer than almost anyone. Davies has been writing about SEO topics as a contributor to Search Engine Journal and Search Engine Watch for over a decade. He is the founder of Beanstalk Marketing and is currently the Lead SEO at Weights & Biases.

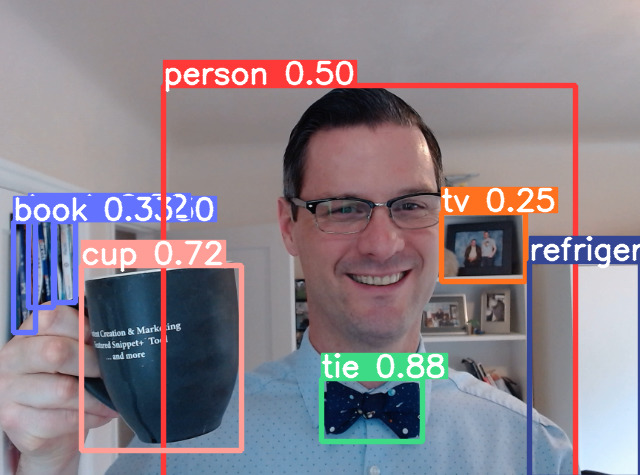

Apart from being a skilled SEO professional, Davies is also knowledgeable about web development and machine learning. As such, he understands the relationship between internet users and search engines better than nearly anyone else in the field today.

Davies isn’t just an SEO expert with technical chops either – he loves sharing his knowledge and using his experience to make the industry better for everyone. That, coupled with his affable personality and sense of humor, makes him widely respected in the SEO world.

We sat down with Davies to talk about technical elements, the relationship between Google and smaller brands, and what he thinks the next core algorithm update might have in store. Here’s what he said.

I. Google’s official stance is that Googlebot can crawl and index JavaScript without any issues. Available studies show that although this is technically true, it takes Googlebot longer and uses more resources – meaning JavaScript SPAs exhaust their crawl budget quickly.

You’ve been in the SEO industry longer than almost anyone. What is your opinion on this?

They are wrong.

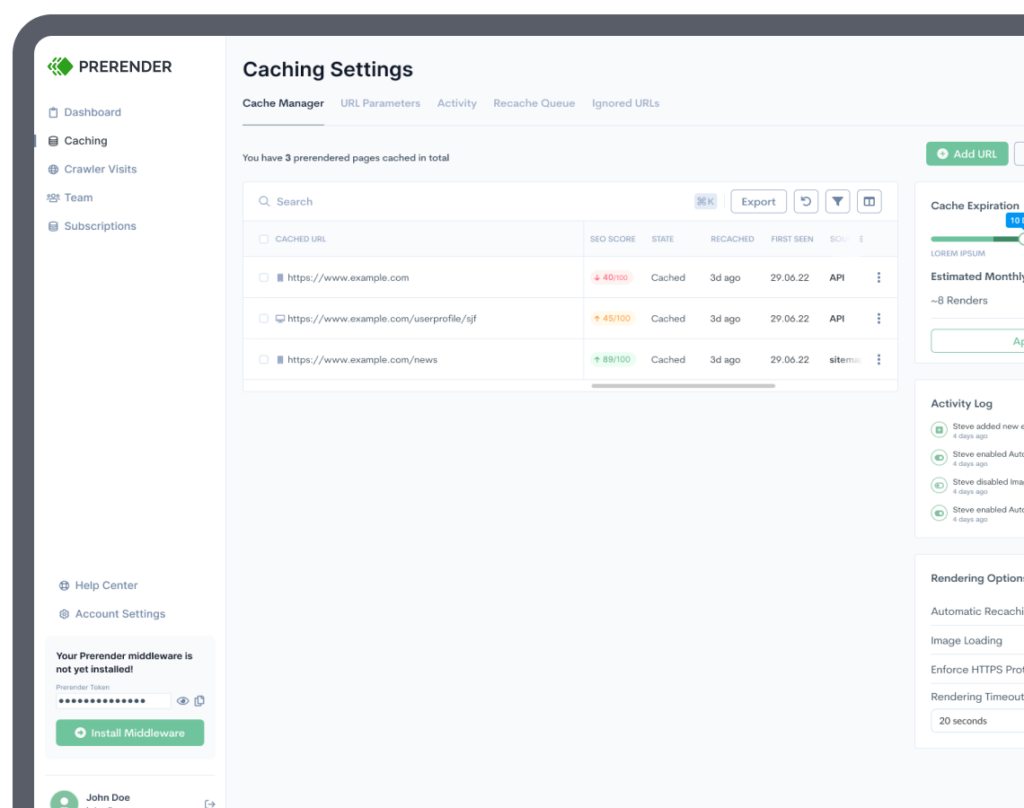

I am working right now for a company that has a SPA website that uses prerendering, and let me tell you, whenever the slightest thing goes wrong I see it very clearly in the rankings and the caches.

I noticed just a couple of months ago a hiccup at Google with prerendering, which was followed pretty closely by a lag in the coverage reports and a form to submit indexing bugs.

In short, I do think they’re working on it – but they’re still a ways off from this being true, and I’m not sure that the solution will ever be crawling.

II. In recent years it’s become harder and harder for small businesses and startups to get visibility on Google SERPs because of algorithm changes that favor established brands that already have an audience and a web presence.

What can Google do to better support smaller businesses and startups and be their advocates?

While I understand the context of the question and sentiment, when I really think about it I’m not sure that’s true.

Yes, when we’re fighting battles with national brands on their turf (national SERPs) it does often come to this, but Google is giving local businesses a lot of new tools and visibility options. The national brands can play there, as applicable – but it’s a lot harder for them to stand out and they don’t seem as favored by traditional metrics.

So if small businesses focus on local markets, which many do, they have serious advantages if they know how to take them. For smaller businesses tackling national markets against sites like Amazon and Walmart, it is true they’ll be fighting an uphill battle.

They need to find a sub-niche where keywords are easier and start there. In that context, not a lot has changed over the years.

III. Many SEO professionals make the mistake of making the Google gods happy at the expense of user experience.

This is a fundamentally flawed approach because Google’s mission statement focuses on the user – to provide the user with the best possible result for a given query.

How do we solve for the user instead? How do we make that user-first mentality the conventional wisdom in SEO?

I have a very short answer to this question because I think we often make it more difficult than it has to be.

Create the content the user wants. Deliver it in the format they want it in. And make sure Google understands that you’ve done that.

To expand a twitch:

Create the content the user wants – Think about the user as the person entering the query, not your customer. Think about all the things a person entering that query might be looking for and deliver as many as you can while keeping the content clean. With that, you maximize the probability that you will satisfy a user, and that’s what Google wants you to do.

Deliver it in the format they want it in – If they want a video, give them a video. They all want it fast. They all want it secure. They all want to be able to access it on any device from any location. Give people what they want, and you’ll be ahead of the next rule Google throws at you.

And make sure Google understands that you’ve done that – Make sure you link between your pages logically, add schema where applicable, etc. You’ve done the work for the user, do a bit more to make sure Google understands it, and you’ll be well on your way.
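As an illustration of the "add schema where applicable" step, here is a minimal sketch of generating JSON-LD structured data for an article page. The helper names (`article_jsonld`, `as_script_tag`) and the sample values are hypothetical; the property names come from the schema.org `Article` type. Which properties matter for a given page depends on the content, so treat this as a starting point rather than a recommended template.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Build a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap the JSON-LD in the script tag that goes into the page HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'
```

Calling `as_script_tag(article_jsonld("Example headline", "Jane Doe", "2022-01-15", "https://example.com/post"))` returns markup that can be placed in the page and then checked with a structured data testing tool.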

IV. Even if we take Google’s word for it that their web crawler can crawl and render JavaScript, there’s no guarantee that websites made using JavaScript frameworks will be well-optimized for both users and search engines.

What is the single most important thing that webmasters and technical SEO experts can do to make sure their JavaScript web applications are well-optimized for search?

Monitor. Monitor. Monitor.

Set up alerts on key pages to run daily and alert you to an unexpected drop.

Manually check pages not just with a crawler, but inspect the cache and inspect the code produced by testing your URL in Google Search Console – see how it renders. Check a variety of pages and page types. Just because one part of the page is fine, doesn’t necessarily mean it all is.

Beyond that, make sure you have a good dev and good technology.
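The daily check described above could be sketched roughly as follows. This is a simplified illustration, not a description of Davies’ own setup: the function names, the `"Googlebot"` user-agent placeholder, and the 200-character threshold are all assumptions. The idea is to fetch a key page the way a crawler would receive it, extract the visible text, and flag the page if expected content is missing, which is a common symptom of a prerendering failure.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _TextExtractor(HTMLParser):
    """Collects text outside of <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def visible_text(html):
    """Return the page text with markup removed and whitespace collapsed."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())


def find_problems(html, required_phrases, min_chars=200):
    """List reasons the HTML looks like an unrendered or broken page."""
    text = visible_text(html)
    problems = []
    if len(text) < min_chars:
        problems.append(f"only {len(text)} characters of visible text")
    for phrase in required_phrases:
        if phrase not in text:
            problems.append(f"missing phrase: {phrase!r}")
    return problems


def fetch_as_crawler(url, user_agent="Googlebot"):
    """Fetch a URL with a crawler-like user agent (placeholder value)."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=30) as response:
        return response.read().decode("utf-8", errors="replace")
```

A scheduled job could call `find_problems(fetch_as_crawler(url), phrases)` for each key page and send an alert whenever the returned list is not empty. A check like this complements, rather than replaces, inspecting the rendered output in Google Search Console.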

V. You’ve been covering Google core algorithm updates on Search Engine Journal for years.

What do you anticipate the next core algorithm update to focus on, and why? What’s missing in the way Google ranks and categorizes web pages that isn’t there already?

This question really got me thinking.

I think as far as core updates go, the next series will likely focus on infrastructure and keeping an increasingly complex arrangement of pieces working together.

We’re watching MUM starting to get used in the wild, and we’ve heard about LaMDA. We’ve read about KELM and the potential it has in creating a more reliable and “honest” picture of the world.

What we don’t read a lot about (mainly because it’s boring and we don’t want to) is the technology behind it. KELM would add verified facts to a picture of the world Google has created from a different system (MUM, for example). Great, but how do you get those two parts communicating and sharing information?

This is, to me, the biggest of their challenges and why I suspect it will be the focus of their core updates for the foreseeable future.

I’ve started reading some of the papers on some of the technologies behind the technologies we hear about.

How ByT5 can improve understanding of content in a noisy environment (where noise may be something like misspelled words on social media, etc.) by moving away from tokens and working byte-to-byte, which required a lot of work to overcome the hurdle of ballooning compute time.

Or how Google FLAN improves zero-shot NLP across domains (where domains are not sites, but rather tasks) so a system trained on classifying sentiment (for example) can be used to improve a translation model with little additional training required for the new task.

This, in my mind, is what the core updates need to deal with.
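To make the ByT5 point above more concrete, here is a toy comparison of byte-level and word-level views of the same text. It is not ByT5’s actual implementation: splitting on whitespace stands in for a real subword tokenizer, and the helper names are made up for this illustration. It shows why a byte-level view is more forgiving of misspellings and why it also produces much longer sequences, which is where the extra compute comes from.

```python
def byte_ids(text):
    """Represent text as its raw UTF-8 byte values (0-255)."""
    return list(text.encode("utf-8"))


def word_tokens(text):
    """Whitespace split, used here as a stand-in for a token vocabulary."""
    return text.lower().split()


def shared_positions(a, b):
    """Count aligned positions where two sequences hold the same item."""
    return sum(1 for x, y in zip(a, b) if x == y)


clean = "definitely great"
noisy = "definately great"  # common misspelling

# Sequence lengths: 16 byte positions versus 2 word tokens.
print(len(byte_ids(clean)), len(word_tokens(clean)))

# The misspelling changes 1 of 16 bytes, but 1 of 2 whole word tokens.
print(shared_positions(byte_ids(clean), byte_ids(noisy)))
print(shared_positions(word_tokens(clean), word_tokens(noisy)))
```

At the byte level the noisy string still looks almost identical to the clean one, while at the word level half of the input has become an unfamiliar token. The cost is that the model has to process eight times as many positions for this short phrase.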

VI. Many web developers lack even a basic understanding of SEO. That creates problems down the line when SEO problems are ignored or buried under legacy code, which makes them harder to diagnose and fix.

As an SEO veteran with web development credentials, what do you think we can do to bridge that gap?

How can web developers make sure that an SEO infrastructure is in place from the moment they begin development? On the other side, what can marketing teams do to make the developers’ jobs easier?

I honestly believe it’s a two-way street.

When I was cutting my teeth I used Dreamweaver 4 to put content into tables and upload them page-by-page. I learned a lot on my way, but the pace of change in dev and SEO meant I needed to choose a path and I was never a developer so I stuck with SEO.

Yes, I can still throw together a decent WordPress site, and probably edit the themes without breaking anything, but I wouldn’t consider myself even an intermediate dev. And it’s great that I know that.

That history and ability, though, I think make me a bit better than some at understanding how to communicate with developers.

I can’t count the number of times I’ve outlined my needs and how to solve a problem to a capable developer, only to have it bite me in the butt when they followed my instructions to unexpected results.

Now I isolate what the problem is, describe it to the developer, and send screenshots showing how I know it exists and how I’ll know when it’s fixed. While I might include a potential fix I found, I try to be clear that it is for illustrative purposes only.

9 times out of 10, if you are working with a good developer, they’ll be able to think of solutions you never would and often solve additional problems you might not have known you had.

Respect them, respect their knowledge and they will respect yours.