When the company behind the world’s most widely used SEO plugin fundamentally changes what it’s optimizing for, that’s worth paying attention to. In this episode of Get Discovered, host Joe Walsh sat down with Alain Schlesser, Principal Architect at Yoast—the plugin powering over 13 million WordPress websites—to unpack what Yoast’s strategic pivot toward AI visibility tracking reveals about where SEO is heading.

Watch the full episode below or read on for key takeaways.

How AI Has Become a Gatekeeper Between Users and Search Engines

Schlesser opens the conversation by explaining that AI has become a new layer between users and search engines, and it’s one that brands now have to think about.

When a user types a question into Perplexity or ChatGPT, they’re not submitting a search query the way they used to. They’re asking a large language model a question. If the LLM determines that the question requires grounding in real-world data, it generates its own search queries and runs them against whatever search engine it’s connected to, whether that’s Google or a proprietary index. All of this happens entirely behind the scenes.

“It’s not enough to appear in the search engines anymore,” Schlesser explained. “You also need to go through the filter that is the AI system and be relevant for the AI system.”

This has two major implications. First, brands now need to optimize for a layer they can’t directly observe or understand. Second, the queries themselves have changed: they’re no longer human-written keyword strings but queries the LLM generates on the user’s behalf, and understanding those queries requires a fundamentally different kind of visibility.

Further reading: Industry Study: What 100M+ Pages Reveal About How AI Chooses Your Content

Why AI Search Fragmentation Has Made SEO Harder Than the Google Era

For years, SEO practitioners enjoyed an unusual luxury: a near-monopoly that made their jobs simpler. Google was the one primary search engine to optimize for, with one set of rules and one analytics framework.

“While everyone cursed the fact that this was a monopoly, it actually made it very straightforward to optimize for,” Schlesser noted.

But things are different now, and that clarity is gone. Every AI answer engine uses a different combination of search backends, and each is powered by an LLM trained on different data. A brand’s visibility can vary dramatically across ChatGPT, Perplexity, and Gemini, not because of anything the brand did differently, but because of how those underlying systems were built.

LLMs Don’t Scroll to Page Two: Why Being in the Top Five Rankings Means Everything

Schlesser also explains why ranking in the top five positions matters so much: AI systems don’t paginate.

“When an LLM runs a search query to gather grounding data, it doesn’t scroll through results the way a human might. It takes the top three to five results… and nothing else,” says Schlesser. “Either you appear in the first few spots, or you don’t exist.”

This means the classic concept of “above the fold” on a SERP isn’t just about user click behavior anymore; it’s baked into how AI systems process information at a technical level. Anything below those first few results is effectively invisible to LLMs, let alone results on page two and beyond.
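To make the cutoff concrete, here is a minimal Python sketch of the grounding step Schlesser describes, assuming a fixed cutoff of five results. The names `SearchResult`, `select_grounding_results`, and `GROUNDING_TOP_K` are hypothetical: AI systems don’t publish their exact selection logic, and real pipelines may weigh more than rank alone.

```python
from dataclasses import dataclass

# Hypothetical cutoff, based on the "top three to five results" Schlesser describes.
GROUNDING_TOP_K = 5


@dataclass
class SearchResult:
    rank: int  # 1-based position in the search engine's result list
    url: str


def select_grounding_results(results: list[SearchResult], top_k: int = GROUNDING_TOP_K) -> list[SearchResult]:
    """Keep only the first top_k results by rank; everything else is discarded."""
    return sorted(results, key=lambda r: r.rank)[:top_k]


def is_visible_to_llm(url: str, results: list[SearchResult], top_k: int = GROUNDING_TOP_K) -> bool:
    """A page can only be used as grounding if it survives the top-k cutoff."""
    return any(r.url == url for r in select_grounding_results(results, top_k))


if __name__ == "__main__":
    serp = [SearchResult(rank=i, url=f"https://example.com/page-{i}") for i in range(1, 11)]
    print(is_visible_to_llm("https://example.com/page-3", serp))  # True
    print(is_visible_to_llm("https://example.com/page-7", serp))  # False: on page one, but past the cutoff
```

Note that result seven is still on page one of a ten-result SERP, yet in this model it never reaches the LLM.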

Rather than diminishing the importance of traditional SEO, this actually raises the stakes. Technical SEO fundamentals like indexability, crawlability, site speed, and structured content matter more now. The threshold for existing in AI search has gotten higher, not lower.

The LLM Training Data Problem: Why Being “Average” Makes You Invisible

Beyond the search layer, there’s a deeper challenge in how LLMs represent brands in their training data. This has to do with statistical compression, says Schlesser.

When an LLM is trained, he explains, it doesn’t retain every data point it encounters. It compresses its understanding into a statistical model that preserves strong signals and discards noise. In practice, this means the model keeps outliers—the cheapest brand, the most premium brand, or the most sustainability-focused brand—and a handful of examples that represent a meaningful average. Everything else gets compressed away.

“If you just go with the defaults because it’s safe, it will actually make it very likely for you to never appear in the consciousness of those LLM systems,” Schlesser said. “Safe will not be enough.”

In traditional search, a strategy of being safe, consistent, and broadly present could accumulate enough volume to be effective. In an AI-driven world, that strategy actively works against you. Brands that don’t stand for something specific, such as original data or a clear, distinct angle or perspective, risk being statistically invisible in the training data that powers the systems where discovery increasingly happens.

The practical upshot: brands need a genuine point of view. Not just good content, but distinctive content that positions them at one end of a meaningful spectrum.

Should You Block AI Crawlers? Why That Strategy Is Likely to Backfire

Some companies have started blocking AI crawlers like GPTBot. It’s an understandable impulse. Bots consume bandwidth and raise server costs, and there are legitimate questions about how the data they collect is being used.

But Schlesser is blunt about what blocking AI crawlers actually means for long-term discoverability: “It just means that you don’t exist.”

The analogy he draws is instructive: you wouldn’t have blocked Googlebot in 2005 just because it was new and unfamiliar. Blocking AI crawlers now is the same category of mistake. You’re opting yourself out of a discovery channel that will only grow in importance.
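For reference, a blanket block is usually a two-line robots.txt rule aimed at the GPTBot user agent. The Python sketch below uses the standard library’s `urllib.robotparser` to compare that rule with a narrower alternative. The `/search/` and `/cart/` paths are hypothetical examples of expensive, low-value URLs, and robots.txt only affects crawlers that choose to honor it.

```python
from urllib.robotparser import RobotFileParser

# Blanket block: GPTBot may not fetch anything; all other crawlers are allowed.
BLANKET_BLOCK = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Narrower alternative (hypothetical paths): content stays open to GPTBot,
# while costly, low-value URLs such as internal search and cart pages stay closed.
TARGETED_BLOCK = """\
User-agent: GPTBot
Disallow: /search/
Disallow: /cart/

User-agent: *
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt rules from a string instead of fetching them over HTTP."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    page = "https://example.com/blog/ai-visibility"
    for name, rules in [("blanket", BLANKET_BLOCK), ("targeted", TARGETED_BLOCK)]:
        parser = build_parser(rules)
        print(name, "GPTBot:", parser.can_fetch("GPTBot", page), "Googlebot:", parser.can_fetch("Googlebot", page))
```

Under the blanket rule, GPTBot cannot read the blog post at all; under the targeted rule, it can, while the hypothetical search and cart paths remain off-limits.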

For brands concerned about server load and bandwidth costs, there are better approaches than blanket blocking, and Yoast has been working on exactly this kind of infrastructure. It has added support for llms.txt, a lightweight file that enables quick, efficient content discovery by AI systems. It also recently entered into a partnership with Microsoft around the NL Web Protocol, a new standard, similar to sitemaps, that lets AI systems get a structured overview of an entire website through a single efficient endpoint.
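An llms.txt file is a plain Markdown file served from the site root. The sketch below renders one following the structure of the public llms.txt proposal as we understand it: an H1 site name, a blockquote summary, then H2 sections of annotated links. It is not Yoast’s generator, and the `Page` type, `render_llms_txt` function, site name, and URLs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    description: str = ""


def render_llms_txt(site_name: str, summary: str, sections: dict[str, list[Page]]) -> str:
    """Render an llms.txt body: H1 site name, blockquote summary, then H2 sections of link lists."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines += [f"## {heading}", ""]
        for page in pages:
            # Each entry is a Markdown link, optionally followed by ": description".
            entry = f"- [{page.title}]({page.url})"
            if page.description:
                entry += f": {page.description}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    print(render_llms_txt(
        "Example Store",
        "Hypothetical shop selling refurbished laptops, with buying guides and store policies.",
        {
            "Guides": [Page("Choosing a refurbished laptop", "https://example.com/guides/choosing", "Buying checklist")],
            "Policies": [Page("Shipping policy", "https://example.com/shipping")],
        },
    ))
```

Because the file is small and static, an AI system can read one URL to get an overview of the site instead of crawling every page.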

The goal isn’t to make your site accessible to every bot indiscriminately. It’s to be discoverable by the AI systems your future customers are using, while managing bandwidth intelligently.

Further reading: Peter Rota on why technical SEO is more important than ever.

Why Traffic Is the Wrong Thing to Optimize For

One of the more grounding moments in the conversation came when Schlesser talked about what actually happens when customers come to Yoast asking for help.

“Customers usually come with the wrong goals in mind,” he said. “Often it’s: I want to increase traffic to my website. Why? What is the business goal?”

More traffic, he pointed out, means higher server costs. That’s the only guaranteed result of more traffic. Unpacking the why behind the traffic goal, whether it’s more orders or more brand awareness, often reveals that raw traffic isn’t the right metric to optimize for at all.

This matters especially now, as AI reshapes the relationship between content and conversion. As more transactions move through automated systems and AI agents rather than human-driven browsing sessions, the question of whether a website is designed for human visitors becomes genuinely complicated. Schlesser’s advice: start measuring bot-driven transactions now, even if they’re a small fraction of your volume. You don’t want to miss the inflection point where automated traffic starts to exceed human traffic for your business.
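One way to start is to record the user agent behind each order and track the automated share week by week. The Python sketch below assumes your order records store a user-agent string; `Order`, `weekly_agent_share`, and `first_majority_week` are hypothetical names. The token list is an illustrative assumption, not a complete registry: some agents browse with ordinary browser user agents, and user agents can be spoofed, so treat the numbers as a rough signal.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Illustrative, incomplete list of AI crawler/agent user-agent tokens (matched case-insensitively).
AI_AGENT_TOKENS = ("gptbot", "chatgpt-user", "oai-searchbot", "perplexitybot", "claudebot")


@dataclass
class Order:
    placed_on: date
    user_agent: str  # assumed to be captured at checkout


def is_ai_agent(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(token in ua for token in AI_AGENT_TOKENS)


def weekly_agent_share(orders: list[Order]) -> dict[tuple[int, int], float]:
    """Map each ISO (year, week) to the fraction of that week's orders placed by AI agents."""
    totals: dict[tuple[int, int], int] = defaultdict(int)
    by_agents: dict[tuple[int, int], int] = defaultdict(int)
    for order in orders:
        iso = order.placed_on.isocalendar()
        week = (iso[0], iso[1])
        totals[week] += 1
        if is_ai_agent(order.user_agent):
            by_agents[week] += 1
    return {week: by_agents[week] / totals[week] for week in sorted(totals)}


def first_majority_week(shares: dict[tuple[int, int], float]) -> Optional[tuple[int, int]]:
    """First week in which AI agents placed more than half of all orders, if any."""
    return next((week for week, share in shares.items() if share > 0.5), None)
```

Even when the weekly share is tiny, keeping the series running is what lets you spot the crossover point Schlesser warns about.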

Looking Ahead Over the Next 12–18 Months

Asked about the near-term outlook for businesses navigating AI-driven discovery, Schlesser didn’t sugarcoat it.

“A lot of things will get worse before they get better,” he said. “But it’s not something anyone can stop.”

His framing: imagine rising sea levels. Businesses that stay above the waterline now will be lifted as the tide rises. Businesses that wait too long to adapt will find it exponentially harder to recover.

For slower-moving organizations, the most urgent first step isn’t to build a comprehensive AI visibility strategy overnight. It’s to establish your own real-time metrics so you can see what’s actually happening in your specific industry or for your specific audience, rather than waiting for industry reports or analyst coverage that will always lag the reality on the ground.

“You want to have your own metrics that you’ve seen in real time, that you want to make decisions on,” Schlesser said. “Not wait for someone else to write a book on it.”
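A simple first metric of your own is the number of visits referred by AI answer engines each day. The Python sketch below assumes you can export (timestamp, referrer) pairs from your analytics or server logs. The hostname table and the function names are illustrative assumptions, and AI-driven visits that arrive without a referrer will not be counted.

```python
from collections import Counter
from datetime import datetime
from typing import Optional
from urllib.parse import urlparse

# Illustrative referrer hosts for AI answer engines; extend for your own audience.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if it isn't a known AI host."""
    host = urlparse(referrer).hostname or ""
    return AI_REFERRER_HOSTS.get(host)


def daily_ai_referrals(hits: list[tuple[datetime, str]]) -> Counter:
    """Count visits per (ISO day, AI engine) from (timestamp, referrer) pairs."""
    counts: Counter = Counter()
    for ts, referrer in hits:
        source = ai_source(referrer)
        if source is not None:
            counts[(ts.date().isoformat(), source)] += 1
    return counts
```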

Key Takeaways

- AI is now a filter in front of search, not a replacement for it. Traditional SEO matters more than ever, but you need to optimize for the AI layer on top of it, not just the search index beneath it.

- LLMs only see the top 3–5 search results. There is no page two. Being outside the top results means not existing for AI-powered answer engines.

- Being safe with your content isn’t enough. Distinctive brands survive statistical compression; average brands don’t. When LLM training keeps clear outliers and a few representative examples and compresses away the rest, being safe and broadly present is no longer a viable strategy.

- Blocking AI crawlers is a short-term decision with long-term costs. Better infrastructure solutions exist, such as llms.txt and the NL Web Protocol, that let you manage bot traffic intelligently without opting out of AI discovery entirely.

- Don’t wait to start. Establish real-time AI visibility metrics now, rather than waiting for industry reports. Instrument your own data so you can see the inflection points in your business before they hit.

Tune Into the Full Conversation

Listen to the full episode of the Get Discovered podcast wherever you get your podcasts. Make sure to subscribe so you don’t miss future episodes with SEO experts and business leaders navigating the AI discovery challenge in real time. To connect with Alain, visit Yoast or find him on LinkedIn.

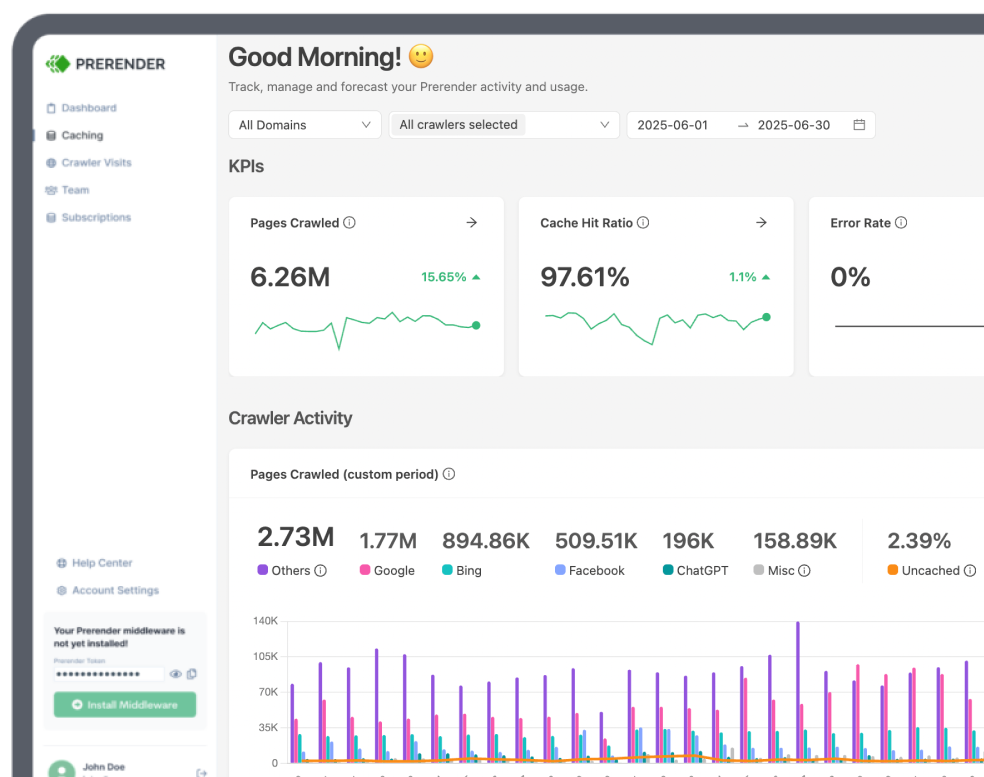

To make sure your site is visible to LLMs, try Prerender.io for free. We make sure your site is visible to ChatGPT, Perplexity, Claude, and every AI search platform your buyers are using.