Before your product listings can be indexed and rank on SERPs, they have to be crawled. To recap: bots must first crawl your content, spending your (already limited) crawl budget, before they can “understand” it. This can be a complex process for standard sites, and for ecommerce sites it’s even more complicated.

Due to the dynamic nature of their content, ecommerce sites are more prone to a depleted or poorly managed crawl budget. Read on to find out which crawl requests drain your budget, plus some tips for managing it more efficiently.

💡 Want to know more about crawl budgets? Download the free technical SEO guide to crawl budget optimization and learn how to improve your site’s visibility on search engines.

Why Ecommerce Sites Should Care About Crawl Budget Optimization

Ecommerce websites are beasts. Hundreds of thousands of product pages constantly change with updates to availability, prices, and reviews, which keeps reshaping the site’s structure. This generates a massive number of URLs and a surge in crawl requests, including requests for JSON data.

Here’s the problem: every (re-)crawl and (re-)index uses up your crawl budget. When hundreds of product listings need attention, your crawl budget quickly depletes. This leads to slow indexation (outdated content) and potentially missed content altogether.

Unfortunately, high product page volume isn’t the only factor that can easily deplete your ecommerce crawl budget. There are other “ecommerce requests” that are often overlooked but can strongly influence your crawl rate performance.

What are Ecommerce Requests?

Ecommerce requests, in the context of crawl budget, refer to the various types of HTTP requests that are generated when crawlers (like Googlebot) interact with an ecommerce website.

Search engine crawlers don’t modify website data; they simply read and analyze it. So the most common ecommerce requests that search crawlers make are GET requests, which are designed to retrieve information from a server.

These ecommerce requests, while crucial for your website’s functionality and user experience, can significantly deplete your crawl budget if you don’t manage them proactively.
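To see which GET requests crawlers are actually making, server access logs are a reasonable starting point. The following is a minimal sketch, assuming logs in the common “combined” format; the regular expression, the function name `googlebot_hits_by_section`, and the idea of grouping by the first path segment are illustrative assumptions, not a standard tool.

```python
import re
from collections import Counter

# Assumes the "combined" access log format:
# ip - - [time] "METHOD path protocol" status bytes "referrer" "user-agent"
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(log_lines):
    """Count Googlebot GET requests, grouped by the first path segment."""
    counts = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if match is None:
            continue  # skip lines that do not fit the assumed format
        if match["method"] != "GET":
            continue
        # The user-agent string can be spoofed; this is only a rough filter.
        if "googlebot" not in match["agent"].lower():
            continue
        path = match["path"].split("?", 1)[0]
        section = "/" + path.strip("/").split("/", 1)[0]
        counts[section] += 1
    return counts
```

Sections with a disproportionate share of hits (for example, internal search or filter URLs) are candidates for the optimizations discussed in the rest of this article.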

3 Common Ecommerce Crawl Budget Drainers and How to Optimize Them

Below are three common ecommerce technical SEO challenges that can easily zero out your retail store crawl budget.

1. Duplicate Content

PROBLEM

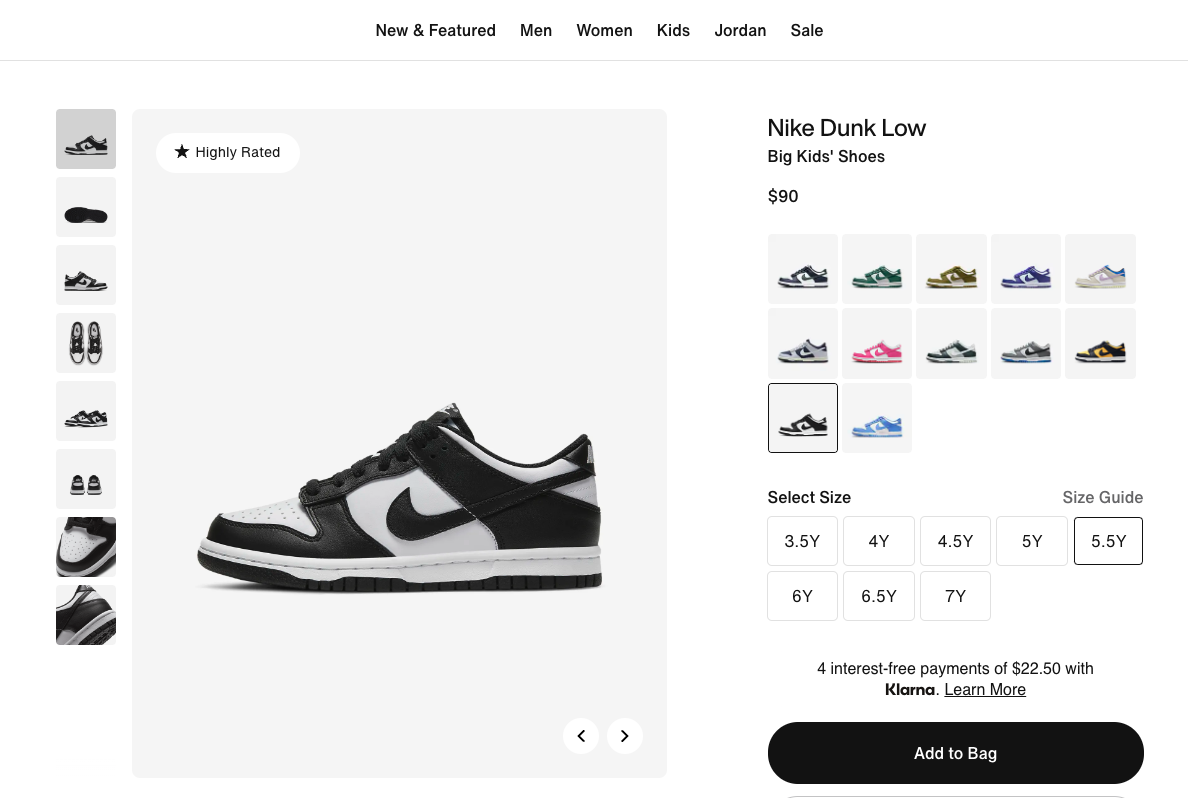

One of the biggest crawl budget killers for ecommerce sites is duplicate content. Think about product variations based on color, size, or other minor details. Each variation often gets its own unique URL, resulting in multiple pages with nearly identical content.

When search engines encounter multiple URLs leading to essentially the same content, it creates a dilemma. Which version should they index as the main page? How should they allocate their finite crawling resources? Consequently, Googlebot may spend your crawl limit crawling all variations, wasting your valuable crawl budget.

This problem occurs frequently on retail sites for a few key reasons:

- Product Variations

A single product can have countless SKUs (Stock Keeping Units) with unique URLs due to size, color, material, etc. While these variations offer valuable options to customers, they create technically distinct pages presenting the same core content for search engine crawlers.

- Session IDs and Tracking Parameters

URL parameters, such as session IDs, affiliate codes, and tracking parameters, often get appended to product and category URLs. This creates a massive number of URLs that look distinct to a search engine but serve identical content.

- Faceted Navigation

Modern ecommerce platforms offer robust filtering and faceting options to refine product searches. However, this functionality can present technical SEO problems as each filter combination applied to a category page generates a new URL, potentially leading to duplicate content.

All three factors above make your ecommerce site generate hundreds or even thousands of URLs that are essentially identical from a search engine’s perspective. This not only dilutes the link equity and authority of your most valuable product and category pages, but it also adds unnecessary load to your server and forces crawlers to waste precious time sifting through the redundant content.

SOLUTION

Deleting or merging duplicate content is the best way to reclaim your crawl budget, and this sometimes means guiding Googlebot on which URLs to crawl. You can do that with the following steps:

Step 1: Identify Duplicate Content

Use website crawling tools like Screaming Frog or SEMrush Site Audit to identify duplicate pages. The free version of Screaming Frog might be sufficient for smaller websites with limited budgets, but if you need a more comprehensive SEO audit with advanced reporting and prioritization, SEMrush Site Audit could be a better choice.
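For a rough idea of what such tools look for, the sketch below groups URLs whose page text is identical after whitespace and case are normalized. It is a simplified illustration, not how Screaming Frog or SEMrush work internally; the function names and the `pages` mapping (URL to extracted page text) are assumptions, and it only catches exact duplicates, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing it."""
    collapsed = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(collapsed.encode("utf-8")).hexdigest()


def find_duplicates(pages: dict) -> list:
    """Return groups of URLs (sorted) that share the same content fingerprint."""
    groups = defaultdict(list)
    for url, body in pages.items():
        groups[fingerprint(body)].append(url)
    # Only groups with more than one URL are duplicates.
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]
```

Each returned group is a set of URLs that could be consolidated or pointed at a single canonical URL.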

Related: Not a fan of Screaming Frog? These 10 free and paid Screaming Frog alternatives may suit you better.

Step 2: Consolidate Duplicate Pages

Instead of separate URLs for product variations (color, size), consider consolidating them onto a single page with clear filtering options. This reduces the number of URLs competing for crawl budget and ensures unique content gets prioritized.
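One way to approach consolidation is to map every parameterized URL to a single normalized form, which can then serve as the canonical target. The following is a minimal sketch using Python’s standard `urllib.parse`; the parameter names in `IGNORED_PARAMS` and `IGNORED_PREFIXES` are hypothetical and would need to match the parameters your own platform appends.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that do not change what the page shows.
IGNORED_PARAMS = {"sessionid", "sid", "ref", "affiliate", "gclid", "fbclid"}
IGNORED_PREFIXES = ("utm_",)


def normalize_url(url: str) -> str:
    """Drop session/tracking parameters and sort the remaining ones."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
        and not key.lower().startswith(IGNORED_PREFIXES)
    ]
    kept.sort()  # a stable order so equivalent URLs compare equal
    # The fragment is dropped too, since it is never sent to the server.
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


print(normalize_url("https://shop.example.com/shoes?utm_source=mail&color=red&sid=42"))
# -> https://shop.example.com/shoes?color=red
```

Whether a parameter such as `color` should also be dropped depends on whether you want each variation to have its own indexable URL.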

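Step 3: Leverage Robots.txt and “Noindex”

Use robots.txt to block out-of-stock product pages and other unwanted pages from being crawled. This prevents Googlebot from wasting its crawl budget on irrelevant content. Keep in mind that robots.txt and “noindex” do different jobs: a robots.txt disallow rule stops crawling, while a noindex meta robots tag stops indexing but still requires the page to be crawled. If a URL is blocked in robots.txt, Googlebot never sees its noindex tag, so pick one approach per URL. Visit our guide for detailed instructions on how to apply robots.txt directives to your website.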
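Before deploying robots.txt rules, it can help to check that they block what you intend and nothing more. The sketch below uses Python’s standard `urllib.robotparser`; the rules and URLs are hypothetical placeholders. Note that this parser does simple prefix matching and applies the first matching rule, so it may not evaluate wildcard patterns the way Googlebot does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; the paths are placeholders, not from a real site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_crawlable(parser: RobotFileParser, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(ROBOTS_TXT)
for url in (
    "https://shop.example.com/products/red-shirt",
    "https://shop.example.com/search?q=shirt",
    "https://shop.example.com/cart/checkout",
):
    print(url, is_crawlable(parser, url))
```

Running a list of important product and category URLs through a check like this is a cheap way to catch a rule that accidentally blocks pages you want crawled.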

2. Infinite Scrolling

PROBLEM

Infinite scrolling creates a seamless user experience by loading more products as the user scrolls down a page. While great for user engagement, it presents a challenge for search engine crawlers.

Search engines primarily rely on the initial content loaded on a webpage for indexing. They send a GET request for this content, which includes product information, descriptions, and category listings.

With infinite scrolling, additional product information and listings are loaded dynamically as the user scrolls down. Since Googlebot prioritizes what it sees on the first page load and does not scroll the way a user does, important products buried deeper within the infinite scroll can be missed entirely.

This translates to missing content, as products loaded further down the infinite scroll become invisible to search engines. The overall picture of your offerings presented to search engines also becomes incomplete, leading to inaccurate search results and hindering your organic reach.

SOLUTION

There are a couple of ways to fix this.

- Implement “Load More” Functionality

Instead of infinite scrolling, use a “Load More” button that dynamically loads additional products without generating new URLs.

- Use Canonical Tags

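Set canonical tags so that duplicate variants of a category page (for example, URLs with sorting or tracking parameters) point to the clean category URL, consolidating link equity and crawl budget. Avoid pointing every paginated URL at page one, though: Google’s pagination guidance recommends that each page in a sequence carries its own canonical URL, since page two lists different products than page one.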

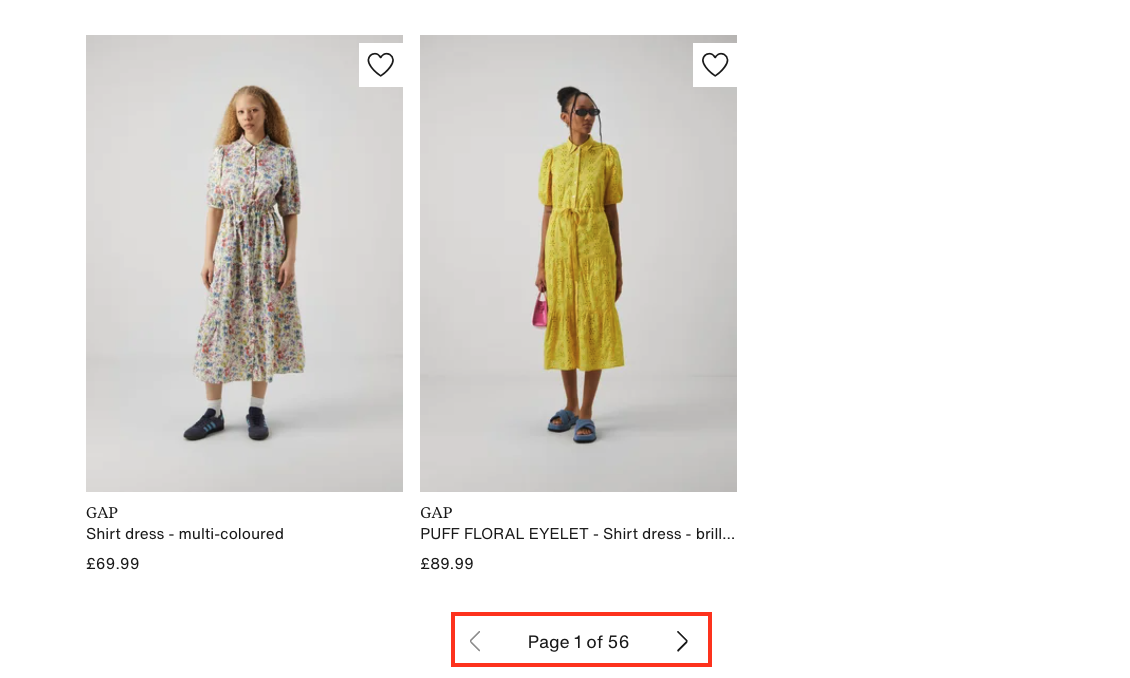

- Pagination

While not as user-friendly as infinite scrolling, pagination allows Googlebot to see all available product listings on separate pages, guaranteeing complete indexing.

However, to save crawl budget, consider setting a sensible limit on the number of paginated URLs you expose to crawlers, such as the first 10-20 pages. You can then use robots.txt to prevent search engines from crawling deeper paginated URLs, or a noindex meta robots tag to keep them out of the index.
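As an illustration of capping pagination, the sketch below builds the list of paginated category URLs to expose to crawlers (for example, in internal links). The `?page=N` URL scheme, the function name, and the default values are assumptions made for the example, not a recommendation for any specific platform.

```python
import math


def paginated_urls(base_url: str, total_items: int, per_page: int = 24, max_pages: int = 20) -> list:
    """Return crawlable paginated URLs, capped at max_pages."""
    if per_page <= 0 or max_pages <= 0:
        raise ValueError("per_page and max_pages must be positive")
    page_count = max(1, math.ceil(total_items / per_page))
    crawlable = min(page_count, max_pages)
    # Page 1 uses the clean category URL; later pages add ?page=N.
    return [
        base_url if page == 1 else f"{base_url}?page={page}"
        for page in range(1, crawlable + 1)
    ]


print(paginated_urls("https://shop.example.com/shoes", total_items=50))
# -> ['https://shop.example.com/shoes', 'https://shop.example.com/shoes?page=2', 'https://shop.example.com/shoes?page=3']
```

Products that only appear beyond the cap still need another crawl path, such as an XML sitemap or links from subcategory pages.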

3. Unoptimized Ecommerce JavaScript Content

PROBLEM

Another major factor depleting ecommerce crawl budgets is JavaScript-generated content.

Modern ecommerce sites rely heavily on JavaScript frameworks like React, Angular, and Vue for dynamic content, interactive features, and personalization. While JavaScript (JS) is great for user experience (UX), it’s problematic for search engine crawlers because they have difficulty fully parsing and understanding the rendered JS content.

This complexity means search engine crawlers consume more of your crawl budget to crawl, render, and index JS-based content, leaving little for the rest of your site. Pages with complex JavaScript can also take longer to load fully, and that’s a known negative ranking factor for search engines.

SOLUTION

There are a couple of strategies you could use to tackle JavaScript eating up your crawl budget, from minifying JavaScript to using server-side rendering (SSR)—but the best method for JavaScript crawl budget optimization in ecommerce sites is prerendering JavaScript.

Prerendering, using a tool like Prerender, means preparing a static version of your content. Essentially, Prerender renders your JS content ahead of time and feeds this static version to crawlers. This brings in several technical SEO benefits for your ecommerce site:

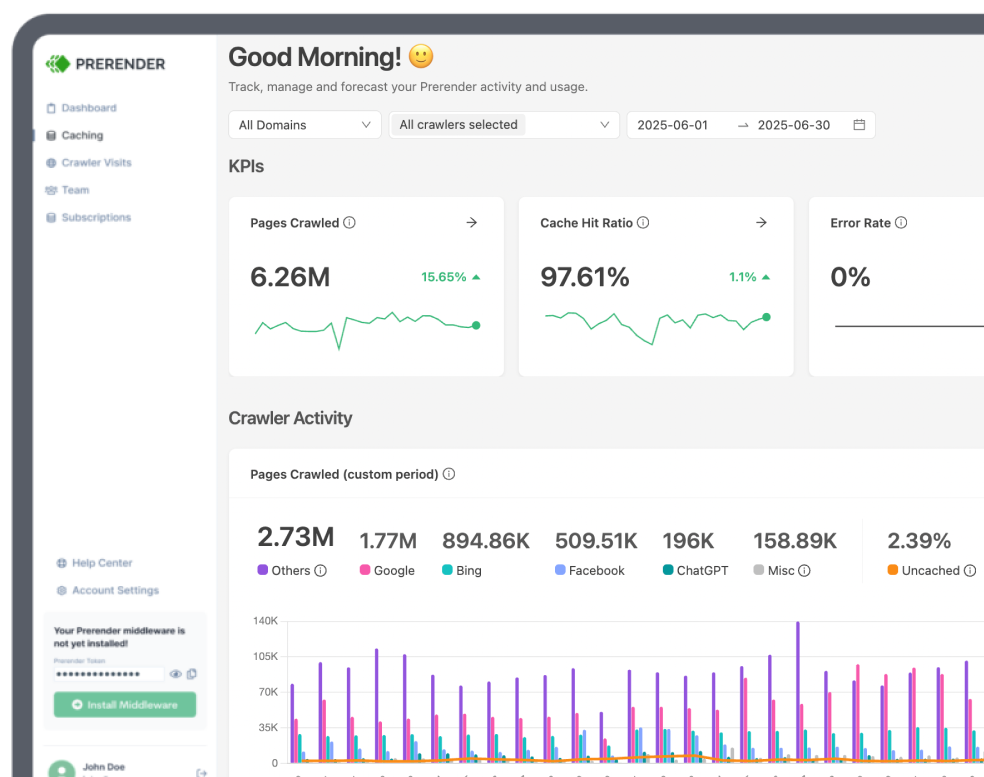

- Save Your Valuable Crawl Budget

Since Prerender renders your JS content, Googlebot will use less crawl budget to process your pages, saving your crawl limit.

- No More Missing Content

Each of your SEO elements and other valuable product information will be 100% indexed, even if the pages rely heavily on the most complex JavaScript code.

- Faster Response Time

Prerender not only solves JavaScript SEO problems for ecommerce, but it also addresses slow page loads by reducing your server response time (SRT) to less than 50 milliseconds.
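Conceptually, serving a prerendered page comes down to recognizing crawler requests and answering them with static HTML. The sketch below illustrates only that routing decision; it is not Prerender’s actual middleware or API, and the token list and function names are assumptions made for the example.

```python
from typing import Optional

# Hypothetical, incomplete list of substrings found in crawler user agents.
CRAWLER_TOKENS = ("googlebot", "bingbot", "duckduckbot", "baiduspider", "yandex")


def is_crawler(user_agent: Optional[str]) -> bool:
    """Rough check for a search engine crawler based on the user agent."""
    if not user_agent:
        return False
    lowered = user_agent.lower()
    return any(token in lowered for token in CRAWLER_TOKENS)


def choose_response(user_agent: Optional[str], prerendered_html: str, app_shell_html: str) -> str:
    """Serve static HTML to crawlers and the JavaScript app to everyone else."""
    return prerendered_html if is_crawler(user_agent) else app_shell_html
```

In practice the prerendered HTML would come from a cache that is refreshed as products change, so crawlers receive current content without executing any JavaScript.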

Crawl rate optimization is vital for SEO success in ecommerce sites built with JavaScript, and Prerender makes it easy. The best part is you can get started with Prerender now for free!

Related: How is prerendering different from other rendering options? Learn here.

Fast Ecommerce Indexation Starts with Optimized Crawl Budget

A healthy crawl budget is the foundation for robust organic visibility, so you need to manage your crawl budget wisely. By understanding the crawl budget drainers specific to ecommerce and implementing the optimization strategies outlined in this article, you can take back control.

To recap, the main causes of ecommerce requests that drain your crawl budget are duplicate content, infinite scrolling, and unoptimized JavaScript content.

The path to reclaiming your crawl budget may not be easy, but the result? Improved search engine visibility, increased organic traffic, and ultimately, more customers finding the products they need on your ecommerce website. That makes it all worth it in the end.

This article outlines several solutions, but if you have difficulty implementing all of them, you can simply use Prerender to solve all of these issues in one go and save your crawl budget. It’s easy to install and use, so you can get started for free now and say goodbye to crawl budget troubles!

Looking for more technical SEO for ecommerce best practices? These blogs may interest you: