Pagination is one of those website components wrapped in mysteries, misconceptions, and myths that can easily break your user experience and, when mishandled, hurt your website’s SEO.

This is especially true since Google announced that it no longer uses the rel=prev/rel=next attributes to handle paginated pages, which until then had been the recommended way to optimize pagination for SEO.

Instead of using rel=prev/rel=next to understand the relationship between paginated pages as a series, Google now looks at every paginated page as a unique page.

This has several implications for SEO, but the most important takeaway is that on-page optimization best practices now apply to every page in your website's pagination.

Although there's no cut-and-paste solution that fits every project, we'll try to shed some light on pagination and how you can optimize it to improve your site's architecture for crawling and indexation.

Common Pagination SEO Issues and How to Fix Them

There are many potential issues that can arise from bad pagination handling, but not necessarily from the pagination component itself.

Google is smart enough to understand the relationship between pages whether or not they have rel=next/prev attributes. So in most cases, you're probably fine as long as user experience is at the core of your pagination design.

That said, let's explore some of the most common issues and myths, and how to make sure search engines can use your pagination to find and index your content correctly.

Canonical and NoIndex Tag Problems

When creating a category page for products or content, we want our root page (Page No. 1) to be the one ranking on the SERPs and not Page 5 of the pagination series.

However, because Google now indexes every page in the series as a unique page, you might see a deeper page, like Page 5, ranking instead of the root page.

For this reason, a lot of misinformed SEOs have recommended adding a canonical tag to every paginated page that points to the root page.

In theory, it sounds like a solid solution. However, if these canonicals stay in place long-term, two things can happen:

- Google may stop crawling the pages that are canonicalized to the root page, because we're essentially telling it to. Canonicalizing every paginated page to Page 1 signals that the relevant content lives only on the first page, which prevents Google from finding deep pages.

- Google may start ignoring your canonicals because they make no sense. Google finds unique content on those paginated pages, so it assumes your canonicals are a mistake. This makes it much harder to send clear signals to Google.

Another solution we've seen implemented is adding a NoIndex tag to all paginated pages after the root page, to prevent Google from picking these pages over the root page in search results.

Although it can be seen as an ingenious solution, it can actually harm the discoverability of deep pages.

Google eventually treats a long-term “noindex, follow” directive as “noindex, nofollow,” because we're basically telling it that these are pages we don't want used in search results at all.

In other words, we’re telling Google to ignore them altogether.

How should we manage canonicals and robots meta tag directives, then?

- The canonical tag on each paginated page should point to itself, as it would on any unique page. Remember that your pagination is an aggregation of content, so each page displays different elements.

- Let Google index paginated pages. These pages are essential for Google to discover pages deep in your site architecture organically.

- Use the URL Inspection tool in Google Search Console (GSC) to confirm Google is using the right canonical URL.
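Here's a minimal Python sketch of a self-referencing canonical. It assumes pagination uses a `?page=N` query parameter (as in the `domain.com` example later in this article) and that Page 1 is served at the bare category URL; `paginated_url` and `canonical_tag` are hypothetical helper names for illustration. No robots noindex tag is emitted, so every page stays indexable.

```python
from html import escape
from urllib.parse import urlencode


def paginated_url(base_url: str, page: int) -> str:
    """Build the URL of a paginated page (hypothetical ?page=N scheme)."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    if page == 1:
        # Assumption: Page 1 is served at the bare category URL.
        return base_url
    return f"{base_url}?{urlencode({'page': page})}"


def canonical_tag(base_url: str, page: int) -> str:
    """Self-referencing canonical: each page points to its own URL, not to Page 1."""
    url = paginated_url(base_url, page)
    return f'<link rel="canonical" href="{escape(url)}">'


if __name__ == "__main__":
    for page in (1, 2, 3):
        print(canonical_tag("https://domain.com/product-category", page))
```

Page 2 gets a canonical pointing at `?page=2`, Page 3 at `?page=3`, and so on, rather than every page pointing back to the root.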

Here's one exception to keep in mind:

If you let users filter or sort the results on your category pages to display different content based on ratings, colors, size, etc., then you might want to add a canonical from these new filter URLs to the main, unfiltered paginated page.

A better solution would be to build this functionality using JavaScript and dynamic hash (#) fragment URLs, since Google generally ignores the fragment part of a URL.
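As a rough sketch of the canonical rule for filter URLs, the Python function below keeps only the pagination parameter and drops every other query parameter plus any fragment. The assumption that `page` is the only parameter identifying a distinct paginated page, and that everything else (color, size, sort) is a filter, is ours; adapt the set to your own URL scheme.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: "page" is the only query parameter that identifies a distinct
# paginated page; every other parameter (color, size, sort, ...) is a filter.
PAGINATION_PARAMS = {"page"}


def canonical_for_filtered_url(url: str) -> str:
    """Point a filtered or sorted URL at its unfiltered paginated equivalent."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k in PAGINATION_PARAMS]
    # The fragment (#...) is dropped; search engines generally ignore it anyway.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


if __name__ == "__main__":
    print(canonical_for_filtered_url(
        "https://domain.com/product-category?color=red&page=2&sort=rating"
    ))  # https://domain.com/product-category?page=2
```

A filtered view of Page 2 therefore canonicalizes to the plain Page 2, not to Page 1, which keeps the self-referencing rule intact for the pagination itself.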

Thin and Duplicate Content

Pagination is mainly used to break down listings of content or products per category, so each page is very similar to the others.

This wasn't an issue before, because Google treated these pages as part of a series, but that's no longer the case.

There are several ways to optimize paginated pages to avoid getting thin or duplicate content warnings, but — like with most on-page best practices — it’s the user experience that should be driving this conversation.

If you're breaking down listings, then paginated pages can't all display the same content. At the same time, unless you're purposely adding paginated pages to inflate page views, there won't be thin content either.

The idea behind pagination is that there are too many elements to be displayed inside one page, so we separate them into several pages.

If we follow this logic, then here’s how to handle thin and duplicate content when creating paginated pages:

- Add as many elements to a single page as possible without affecting loading speed and usability. You'll have to experiment with your website to find the right balance between speed and the number of items you display. If you can fit 20 or 40 items on a single page without compromising speed, then do it.

- Do not break down content into several pages just for the sake of it. If there’s no need for pagination, then you shouldn’t use it.

- Change a few page elements on paginated pages. A now-common technique is to deoptimize paginated pages. For example, if the root page's meta title is “New York Sheets for Sale,” then Page 4's title could be “Page 4/10 of Sheets for Sale in New York.” This kind of deoptimization can steer Google toward the root page as the best fit for your target keywords.

- Don’t overlap content results in several paginated pages. If you have Items 1 to 10 on Page 1, then Page 2 should contain items 11 to 20 and so on.

- Add extra information to the main category page. By adding an FAQ at the bottom or some informational content to the root page, you’re again telling Google that this is the best page of the series and, therefore, it should use it for ranking purposes.
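The non-overlapping ranges and the deoptimized titles from the list above can be sketched in a few lines of Python. The helper names and the 100-item sample catalog are hypothetical; the title pattern mirrors the “Page 4/10” example.

```python
from typing import Sequence, TypeVar

T = TypeVar("T")


def page_count(total_items: int, per_page: int) -> int:
    """Number of pages needed for the listing; always at least one page."""
    if per_page < 1:
        raise ValueError("per_page must be >= 1")
    return max(1, (total_items + per_page - 1) // per_page)


def page_items(items: Sequence[T], page: int, per_page: int) -> Sequence[T]:
    """Items for a 1-based page. Slices are contiguous, so pages never overlap."""
    if page < 1 or per_page < 1:
        raise ValueError("page and per_page must be >= 1")
    start = (page - 1) * per_page
    return items[start:start + per_page]


def page_title(root_title: str, series_title: str, page: int, total_pages: int) -> str:
    """Keep the optimized title on Page 1; use a deoptimized title elsewhere."""
    if page == 1:
        return root_title
    return f"Page {page}/{total_pages} of {series_title}"


if __name__ == "__main__":
    products = [f"sheet-{n}" for n in range(1, 101)]  # hypothetical catalog
    pages = page_count(len(products), 10)
    second = page_items(products, 2, 10)
    print(second[0], second[-1])  # sheet-11 sheet-20
    print(page_title("New York Sheets for Sale", "Sheets for Sale in New York", 4, pages))
```

Because each page starts exactly where the previous one ended, no item appears on two pages, and only Page 1 carries the keyword-focused title.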

Following these guidelines will also prevent keyword cannibalization because you’re optimizing all signals to be pointing to the main page of the category.

Ranking Signal Dilution

Because pagination adds more clicks between the homepage and deeper pages, less authority is passed to those pages within the site architecture.

Each additional layer passes a little less authority. So what can we do about it?

There are two main approaches to take to minimize the impact of your pagination:

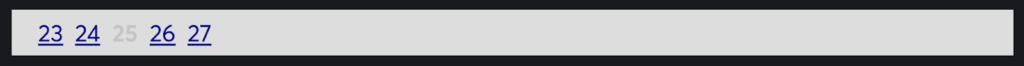

Get Creative With Your Pagination Design and Link Scheme

A study conducted by Portent found that a well-designed pagination scheme can reduce the click depth of a site and thus minimize the impact of pagination on ranking signals.

We recommend you read the full article before committing to a pagination design because there are many interesting ideas to consider.

Of course, there is no BEST solution. It’s all about how long your paginated series is, what kind of experience your users are expecting, and what other functionalities and strategies you have in place.

For most eCommerce sites with 100 to 200 products, a two-step skip pagination should be enough to improve click depth.
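To make click depth concrete, this Python sketch runs a breadth-first search from Page 1 and reports how many clicks each paginated page needs under a given link scheme. Both schemes are made-up illustrations, not the designs from the Portent study: scheme A links only to the previous and next pages, while scheme B links to every page within two steps plus the first and last page.

```python
from collections import deque
from typing import Callable, Dict, Iterable

# A link scheme maps (current page, total pages) to the page numbers it links to.
LinkScheme = Callable[[int, int], Iterable[int]]


def click_depths(total_pages: int, links: LinkScheme) -> Dict[int, int]:
    """Breadth-first search from Page 1: fewest clicks needed to reach each page."""
    depth = {1: 0}
    queue = deque([1])
    while queue:
        page = queue.popleft()
        for target in links(page, total_pages):
            if 1 <= target <= total_pages and target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def prev_next(page: int, total: int) -> Iterable[int]:
    """Hypothetical scheme A: only 'previous' and 'next' links."""
    return (page - 1, page + 1)


def window_and_ends(page: int, total: int) -> Iterable[int]:
    """Hypothetical scheme B: pages within two steps, plus the first and last page."""
    return [*range(page - 2, page + 3), 1, total]


if __name__ == "__main__":
    for name, scheme in (("prev/next", prev_next), ("window + ends", window_and_ends)):
        print(name, max(click_depths(20, scheme).values()))
```

For a 20-page series, the deepest page sits 19 clicks from Page 1 with prev/next links only, and 5 clicks away with the windowed scheme. The same function lets you compare any link scheme you're considering before committing to a design.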

Improve Internal Linking and Architecture

If the core problem is that pages deep in the pagination are too far from key pages, like the homepage and root category pages, then the solution lies in how we structure the website as a whole.

Of course, there are many strategies we can use to distribute link equity more effectively:

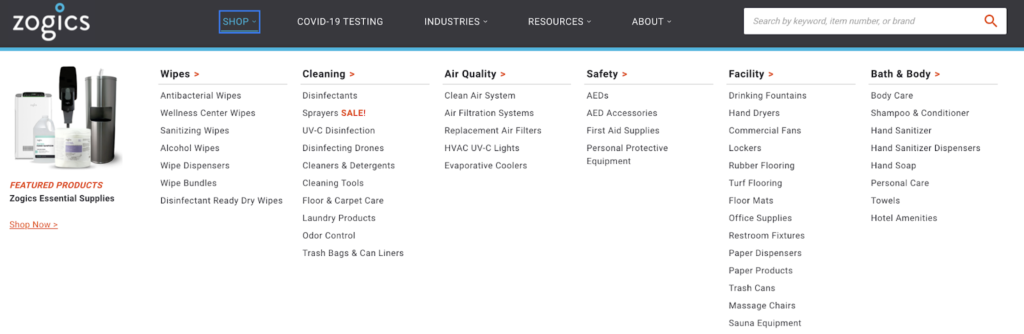

1. Use subcategories to break down huge category pages. Sometimes it’s better to have a lot of subcategories to organize your information instead of hundreds of paginated pages.

This will decrease the depth of pages and keep most unique elements closer to the root category/subcategory.

2. Find pages that can only be discovered within pagination and create internal links to them. You can use Screaming Frog or any other website crawler to find pages that are only linked to from paginated pages. After spotting them, add a link from a relevant piece of content or from the root category page.

3. Make sure that the most popular products or priority content can be found on the first page. By displaying the best of the best on Page 1 of your pagination, you help those items rank better in search results and help your users find the best fit for what they want. SEO and UX win-win.

4. Add deep pages to your sitemaps to help Google find them.
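Step 2 above can be sketched in Python once your crawler's inlinks export is loaded as (source, target) URL pairs; the exact export format depends on the tool, so that loading step is left out. We also assume deep paginated URLs carry `?page=N` with N of 2 or more; both helper names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple
from urllib.parse import parse_qs, urlsplit


def is_deep_paginated(url: str) -> bool:
    """Assumption: paginated pages after the root carry ?page=N with N >= 2."""
    values = parse_qs(urlsplit(url).query).get("page", [])
    return any(v.isdigit() and int(v) >= 2 for v in values)


def pagination_only_pages(inlinks: Iterable[Tuple[str, str]]) -> Set[str]:
    """Return non-paginated URLs whose every inlink comes from a deep paginated page."""
    sources: Dict[str, Set[str]] = defaultdict(set)
    for source, target in inlinks:
        sources[target].add(source)
    return {
        target
        for target, linking_pages in sources.items()
        if not is_deep_paginated(target)
        and all(is_deep_paginated(s) for s in linking_pages)
    }


if __name__ == "__main__":
    crawl = [  # hypothetical (source, target) pairs from a crawler export
        ("https://domain.com/product-category", "https://domain.com/p/a"),
        ("https://domain.com/product-category?page=2", "https://domain.com/p/b"),
        ("https://domain.com/product-category?page=3", "https://domain.com/p/c"),
        ("https://domain.com/blog/post", "https://domain.com/p/c"),
    ]
    print(pagination_only_pages(crawl))  # {'https://domain.com/p/b'}
```

Here `/p/c` is excluded because a blog post also links to it, so only `/p/b` needs a new internal link.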

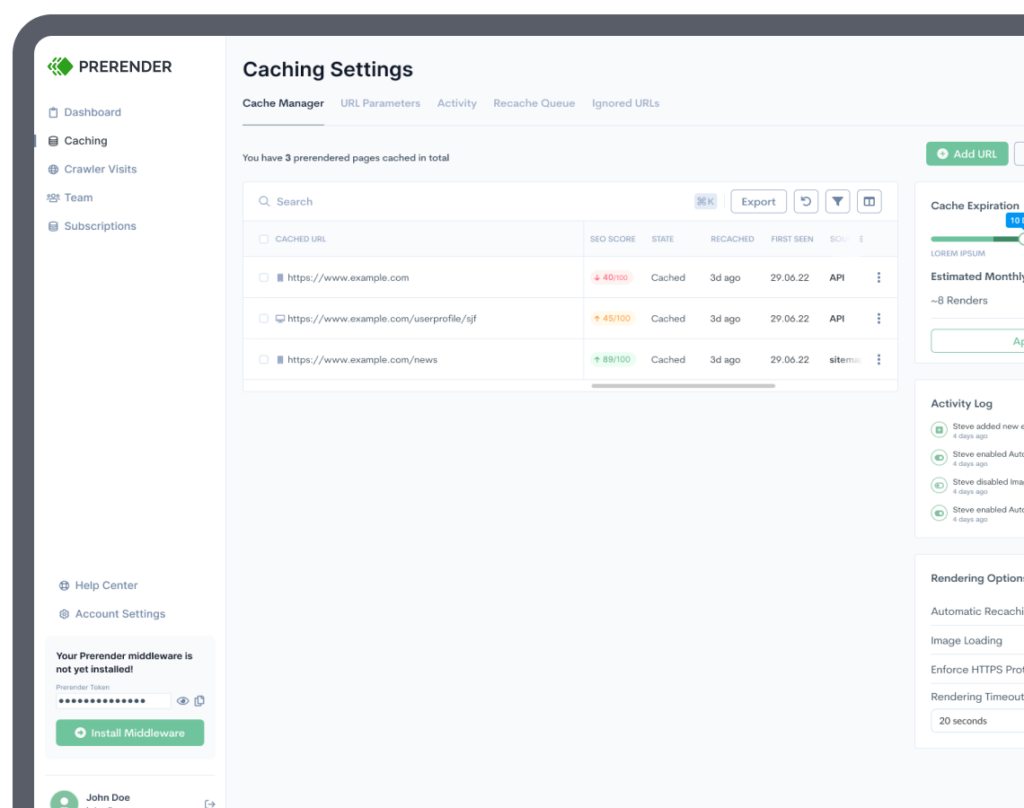
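For step 4, adding deep pages to a sitemap, here's a minimal Python sketch that writes a `<urlset>` document in the sitemaps.org format using only the standard library. It emits just the required `<loc>` element; `build_sitemap` is a hypothetical helper, and optional fields like `<lastmod>` are left out.

```python
import xml.etree.ElementTree as ET
from typing import Iterable

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: Iterable[str]) -> str:
    """Return a minimal sitemaps.org-style <urlset> document for the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in dict.fromkeys(urls):  # de-duplicate while keeping order
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = url  # ElementTree escapes & and <
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        "https://domain.com/p/b",  # hypothetical deep product page
        "https://domain.com/product-category?page=2",
    ]))
```

Feeding this function the pages that are only reachable through pagination gives Google a direct path to them, independent of click depth.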

Uncrawlable Pagination Links

As adoption of JavaScript frameworks like React, Angular, and Vue keeps rising, optimizing JavaScript for SEO has become a core task for every project.

Links are among the elements we've seen mismanaged most often.

Contrary to what many people believe, Google is able to see dynamic content. This is thanks to the rendering step in its indexing process, which executes JavaScript files to obtain the content.

However, the renderer doesn't behave like a user: it doesn't click buttons or trigger events the way a visitor would. In other words, Google won't have access to any content that only loads after a user interaction.

Do you see where we’re heading now?

Googlebot can't follow a link that's hidden behind an event handler, like:

`<a onclick="goto('https://domain.com/product-category?page=2')">`

All your pagination links should be `<a>` tags with an `href` attribute. These are the two things Google looks for when following links. If your pagination doesn't follow this structure, Google will ignore those links.

Good:

`<a href="https://domain.com/product-category?page=2">`

Avoid:

- Client-side router navigation that never renders an `href`

- Onclick events

- Adding the `href` to other elements, like a `<span>`
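To check a pagination component against the patterns listed above, here's a small Python sketch built on the standard library's `html.parser`. It collects `<a>` tags with a real `href` as crawlable and flags `onclick`-only anchors and `href` attributes placed on other elements. The class and function names are hypothetical, and it only inspects static HTML, so run it on the rendered markup if your framework builds links client-side.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple

# Non-<a> tags where href is standard HTML; these are not flagged.
STANDARD_HREF_TAGS = {"link", "base", "area"}


class PaginationLinkAuditor(HTMLParser):
    """Collects crawlable <a href> links and flags patterns crawlers can't follow."""

    def __init__(self) -> None:
        super().__init__()
        self.crawlable: List[str] = []
        self.problems: List[Tuple[str, str]] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        attributes = dict(attrs)
        href = attributes.get("href")
        if tag == "a":
            # "#" and "javascript:" hrefs don't point to a crawlable page.
            if href and not href.startswith(("#", "javascript:")):
                self.crawlable.append(href)
            elif attributes.get("onclick"):
                self.problems.append(("onclick without a real href", attributes["onclick"] or ""))
            else:
                self.problems.append(("<a> without a real href", href or ""))
        elif href and tag not in STANDARD_HREF_TAGS:
            self.problems.append((f"href on <{tag}>", href))


def audit_links(html: str) -> Tuple[List[str], List[Tuple[str, str]]]:
    auditor = PaginationLinkAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.crawlable, auditor.problems


if __name__ == "__main__":
    sample = """<nav>
  <a href="https://domain.com/product-category?page=2">2</a>
  <a onclick="goto('https://domain.com/product-category?page=3')">3</a>
  <span href="https://domain.com/product-category?page=4">4</span>
</nav>"""
    crawlable, problems = audit_links(sample)
    print("crawlable:", crawlable)
    for kind, detail in problems:
        print("problem:", kind, detail)
```

Only the first link in the sample counts as crawlable; the `onclick` anchor and the `<span>` are reported, matching the good and avoid examples above.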

Resource: Learn about the most common JavaScript SEO issues

Last Considerations

SEO-friendly pagination is all about creating the best experience for your users, not about complicated implementations or solutions.

Although we've talked about deoptimizing your paginated pages, that only applies to SEO elements like meta titles and meta descriptions, and to not adding extra optimizations like FAQs to these pages.

However, these are still unique pages that need to be optimized for user experience. You need to help these pages achieve their goal of making it easier for users to access information from your site.

A few things to remember when building these pages include:

- Mobile friendliness

- Page speed

- UX design

- Page layout

- Filters

If you focus on these principles, your pagination will benefit your website and your SEO efforts instead of hurting them.