Most marketing teams still treat AI search as a channel to monitor. But it should be something more than that, says Niklas Buschner, founder and CEO of Radyant.

Radyant is one of Europe’s leading growth agencies focused on SEO and AI search. Niklas also hosts Masters of Search, a top SEO podcast where he interviews growth leaders from companies like Wise, Miro, and ElevenLabs.

In this episode of the Get Discovered podcast, Niklas sat down with host Joe Walsh to talk about the real scale of AI search, why your analytics are badly understating its contribution to pipeline, and what it actually takes to measure discovery when the click doesn’t happen.

Watch the full episode below or read on for key takeaways.

AI Search Is Already at 20% of Google’s Volume

The data point that anchors this conversation comes from a study by Ethan Smith at Graphite (you can also tune into his Get Discovered podcast episode directly).

Using SimilarWeb data, Smith compared web traffic to top AI chatbots (ChatGPT, Perplexity, Gemini, and Claude) against Google’s overall traffic volume. His finding so far? AI search is already pulling roughly 20% of classic search volume.

That number will surprise a lot of people, but not Niklas.

“A lot of people still perceive AI search as being hype and being niche,” he says. “And I think this is just not true.”

Google has AI interfaces baked into its own search experience, and cleanly separating “classic Google” from “Google with AI Overviews” in traffic data remains genuinely difficult. But the directional truth is hard to argue with. A channel that barely existed three years ago is now operating at significant scale, and it’s only projected to grow.

“You have to look at this new reality and accept its significance,” Niklas says. “And only then you can really see what still holds and what you have to recalibrate.”

Further reading: Industry Study: What 100M+ Pages Reveal About How AI Chooses Your Content

Why Your Analytics Are Understating AI Search Contribution by 15-30x

Even as AI search grows, most measurement systems are capturing almost none of it. Niklas’s agency has tracked this gap systematically across its entire client portfolio.

They compare two data points: click-based attribution (what Google Analytics or HubSpot records as referred traffic from AI platforms) against self-reported attribution (asking leads and customers directly how they found you).

The gap is large. His data shows that analytics tools understate AI search’s contribution to pipeline by a factor of 15 to 30.

This is structural, he says. For now, AI platforms aren’t incentivized to send users a click. So, the typical user journey looks like this:

- Open ChatGPT

- Ask for a tool recommendation

- Get an answer

- Close the chat

- Type the brand name directly into Google

By the time the user arrives on your site, the visit registers as branded organic search or branded paid search. The AI channel is completely invisible.

“The platforms are not really incentivized for a click-out,” Niklas explains. “You end up with a click-based attribution that says paid search. But if you ask people how they found you, they’ll tell you ChatGPT.”

As a result, companies that optimize based only on what their analytics say are likely making systematically wrong decisions about where to invest.
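To make the size of that gap concrete, here is a minimal sketch of the arithmetic behind an understatement factor: the number of leads who self-report AI search divided by the number that click-based analytics credits to AI referrals. The function name and every number below are hypothetical and chosen only for illustration; they are not Radyant’s client data.

```python
def understatement_factor(click_attributed: int, self_reported: int) -> float:
    """Return how many times self-reported AI leads exceed click-attributed ones."""
    if click_attributed <= 0:
        raise ValueError("click_attributed must be positive to compute a ratio")
    return self_reported / click_attributed


# Hypothetical numbers for illustration only (not real client data).
leads_total = 1000
click_attributed_ai = 5   # leads analytics credits to AI platform referrals
self_reported_ai = 100    # leads who named an AI assistant when asked directly

factor = understatement_factor(click_attributed_ai, self_reported_ai)

print(f"Click-based AI share:   {click_attributed_ai / leads_total:.1%}")
print(f"Self-reported AI share: {self_reported_ai / leads_total:.1%}")
print(f"Understatement factor:  {factor:.0f}x")
```

With these made-up inputs, analytics would credit AI search with 0.5% of leads while 10% of leads name it themselves, a 20x gap that falls inside the 15 to 30 times range described above.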

Further reading: How to Get Indexed on AI Platforms

The Three-Layer Attribution Model That Works

The solution Niklas recommends isn’t another attribution tool. It’s a three-layer measurement system that teams can build themselves:

1. Click-based / cookie attribution. Keep running it. Although it’s incomplete, it still provides a useful baseline.

2. Self-reported attribution. Add a free-text field (not a dropdown) to lead forms and post-purchase surveys. Ask: “How did you hear about us?” and let people write whatever they want. The key here is free text over a dropdown. Dropdowns lock you into the channels you already know about. If a new AI platform emerges, a dropdown won’t catch it. And today’s LLMs can analyze hundreds or thousands of open-text responses in minutes, so the “it’s too hard to analyze” objection no longer holds.

3. Sales-reported attribution. Train your sales team to ask on calls: “Quick question: where did you first hear about us? What were you looking for?” This layer adds topical context that no form can capture. When a prospect tells a sales rep they were searching for a solution to a specific problem, that’s attribution data, a content signal, and a positioning insight all at once.

Niklas is clear that this is about the directional truth, not perfect data.

“I try to have a way more scrappy and less sophisticated view on that, because I do not see companies making more progress just based on a super sophisticated attribution tool they have bought.”
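In that scrappy spirit, the self-reported layer can start very small. The sketch below tallies free-text “How did you hear about us?” answers into rough channels using plain keyword matching. This is a simple stand-in for the LLM-based analysis mentioned above, not a description of Radyant’s tooling; the keyword map, channel names, and sample responses are all hypothetical.

```python
from collections import Counter

# Hypothetical keyword map. Order matters: the first matching channel wins,
# so "asked ChatGPT, then googled you" is counted as AI search, not search.
CHANNEL_KEYWORDS = {
    "ai_search": ["chatgpt", "perplexity", "gemini", "claude"],
    "search": ["google", "bing"],
    "podcast": ["podcast"],
    "referral": ["colleague", "friend", "recommended"],
}


def classify(response: str) -> str:
    """Map one free-text answer to a channel, or 'other' if nothing matches."""
    text = response.lower()
    for channel, keywords in CHANNEL_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return channel
    return "other"


def tally(responses: list[str]) -> Counter:
    """Count how many responses fall into each channel."""
    return Counter(classify(r) for r in responses)


# Hypothetical survey answers for illustration only.
sample = [
    "Asked ChatGPT for a tool recommendation, then googled you",
    "Perplexity",
    "Google search",
    "A colleague mentioned you",
    "Saw you at a conference",
]

for channel, count in tally(sample).most_common():
    print(f"{channel}: {count}")
```

Anything that lands in “other” is worth reading by hand. That bucket is where a new platform or discovery pattern would show up first, which is the advantage of free text over a fixed dropdown.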

Why the “Traffic Going Up” Heuristic No Longer Works

For years, SEO reporting relied on a simple, convenient signal: if traffic was growing in Google Search Console, SEO was working. A blue line going from the bottom-left to the top-right was easy to show in a board meeting.

“That was never the right way to assess the success of SEO based on growing traffic,” Niklas says. “But it was very easy to look at. And very convenient.”

Attribution has changed, not because the underlying business questions are different, but because the easy proxy metric no longer works the way it did. The response shouldn’t be paralysis or a $30K attribution platform. According to Niklas, it should be a return to first principles: what business outcome are you actually trying to drive? First, count the real outcomes. Second, understand, directionally, where they’re coming from.

There is precedent for this. Companies made advertising decisions for decades before Google Analytics existed. They used market research, asked customers, and tested marketing methods with no way of precisely attributing the results. That same approach, combined with today’s ability to analyze open-text responses at scale, is more than enough to make sound channel investment decisions.

Further reading: Why the Golden Era of Content is Over – A Conversation with Ryan Law

The Biggest Misconception About AI Search Right Now

When asked about the misconceptions he encounters most often, Niklas returns to scale.

Many marketing leaders still believe AI search is a fringe channel that inflated during the hype cycle and will settle back to marginal significance. He thinks that belief is costing companies real pipeline.

A second misconception is more technical but equally consequential. People ask ChatGPT why it isn’t showing their website, receive an answer they don’t understand, and conclude the whole system is a black box. In fact, large language models still retrieve information from the web. They use indexes, and the content quality, authority signals, and technical foundations that made a site visible in classic search still matter. Those signals are now filtered through an additional layer of AI reasoning about what’s most relevant to surface.

“A lot of stuff that helped with SEO also helps with AI search. But you have to accept the significance of this new reality first.”

Further reading: Technical SEO for AI Search

What the Next 12–18 Months Look Like

When asked what gets worse before it gets better, Niklas points to the job market. Companies feeling pressure to automate will push further and faster than is sustainable, before the reality of where human judgment remains necessary reasserts itself.

He recommends checking out Anthropic’s research on the economic impact of AI on the job market, and specifically a chart showing the theoretical capabilities of AI models versus the extent to which those capabilities are actually in use today. The gap is significant, and it’s not clear it will close as quickly as many assume. More capability doesn’t automatically translate to deployment at scale.

On AI search specifically, he’s optimistic about measurement improving and user behavior continuing to shift. The share of discovery happening outside Google won’t shrink. The question is whether marketing teams build the infrastructure to track it before they’re left guessing.

“I am 100% convinced that it will get better over time. It’s just a matter of how we use the technology and what we use it for.”

Key Takeaways

- AI search is already at significant scale. Graphite’s study estimates it’s pulling roughly 20% of classic Google search volume.

- Your analytics don’t tell you the full story. Radyant’s client data shows click-based attribution understates AI search’s contribution to pipeline by 15 to 30 times.

- Build a three-layer attribution system. Combine click-based attribution, self-reported attribution (free text, not a dropdown), and sales-reported attribution for a directional picture you can act on.

- Free text over dropdowns. A fixed dropdown will miss new AI channels and discovery patterns. Let people tell you how they found you in their own words.

- SEO fundamentals still apply. LLMs still rely on search indexes, technical foundations, content quality, and authority signals.

- Don’t wait for perfect attribution. The companies making progress are the ones moving with imperfect data, rather than waiting for a system that will give them certainty.

Tune Into the Full Conversation

Listen to the full episode of the Get Discovered podcast wherever you get your podcasts. Subscribe so you don’t miss future episodes with marketing and SEO leaders navigating the AI discovery challenge in real time.

To connect with Niklas, visit Radyant or find him on LinkedIn. His podcast, Masters of Search, is available on YouTube and wherever you listen to podcasts.

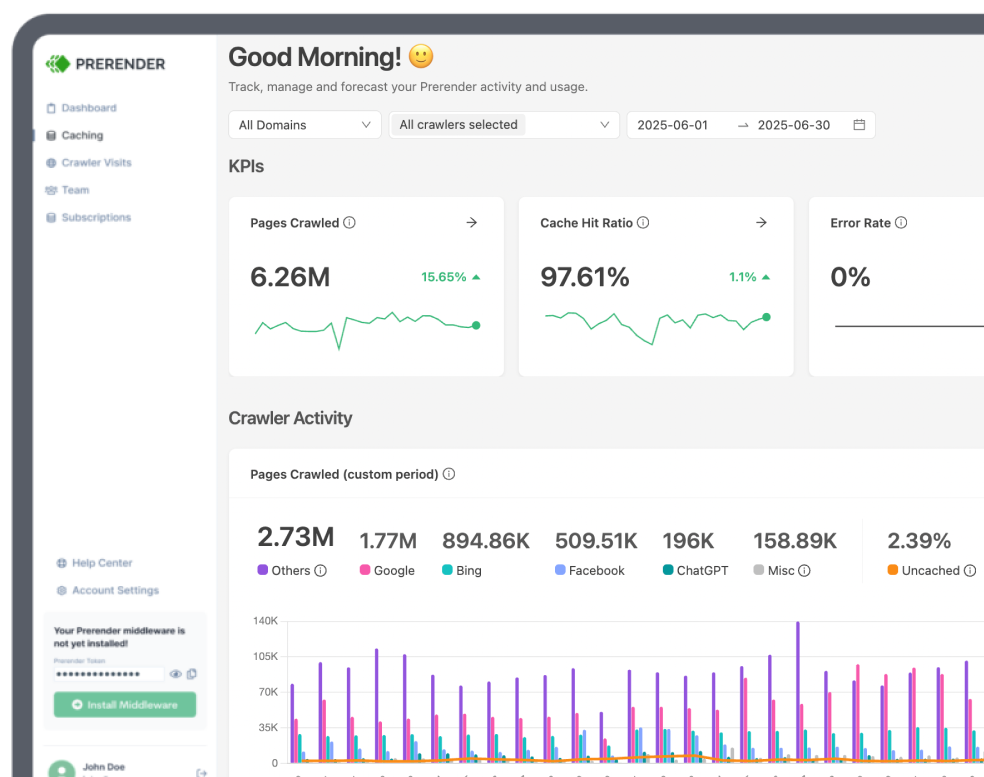

About Prerender.io

Prerender.io is a leading SEO solution that helps modern websites ensure their JavaScript-heavy pages are fully visible to search engines and AI tools. Trusted by companies like Microsoft, Salesforce, and Walmart, Prerender is the go-to partner for businesses navigating the future of SEO and AI-driven discoverability. Start for free today.