If your website still can’t clearly communicate with AI agents, it’s effectively invisible to AI-powered search and assistants like ChatGPT, Claude, and Gemini. As AI-driven discovery claims a growing share of how people find information, building AI-agent-friendly websites has become a core requirement for staying visible in today’s AI-first search landscape.

In this guide, we’ll walk through the shift toward the agentic web and the technical principles of web architecture needed to build an AI-agent-friendly website. By the end, you’ll understand how to create an AI-first website that supports natural language interfaces, structured data consumption, and programmatic web access—ensuring your site serves both human users and the growing ecosystem of AI agents and crawlers.

TL;DR: The Agentic Web Explained and How to Build One

- The agentic web is action-driven, not page-driven

In the agentic web, autonomous AI agents don’t just index content. They understand context, evaluate options, and execute tasks on behalf of users. Websites must expose structure, intent, and actions in a way machines can reliably understand.

- AI-agent-friendly websites use dual-interface design

This means maintaining a human-friendly UX while exposing machine-readable interfaces through semantic HTML, structured data, and programmatic web access (APIs, sitemaps, schema markup).

- JavaScript sites must be prerendered to be visible to AI agents

Most AI crawlers can’t execute JavaScript. Tools like Prerender.io serve static HTML snapshots to AI agents while preserving the full JavaScript experience for humans, making prerendering the fastest path to AI visibility.

What Is the Agentic Web?

The agentic web describes how websites are increasingly consumed not just by people in browsers, but by autonomous AI agents. Instead of loading pages and parsing markup loosely, these agents interact with websites as systems, expecting structured data, predictable behavior, and clearly defined capabilities.

To put it simply, unlike traditional web crawlers that index documents, AI agents understand context and execute tasks.

Traditional Search Engine Crawlers vs. Agentic Web AI Agents

The key difference between old search engine crawlers and AI agents lies in their autonomy:

- A search engine crawler visits your site, indexes content, and leaves.

- An AI agent might visit your site, understand your product catalog, compare prices with competitors, and complete a purchase on behalf of a user, all without that user ever seeing your homepage.

Microsoft’s chief of communications has described this agentic web transformation as a shift from information retrieval to an action-oriented framework. Users issue high-level goals, while agents autonomously plan, coordinate, and execute.

“We envision a world in which agents operate across individual, organizational, team and end-to-end business contexts. This emerging vision of the internet is an open agentic web, where AI agents make decisions and perform tasks on behalf of users or organizations.” – Frank X. Shaw

This shift to an agentic web means that today’s websites need to expose their structure, intent, and actions in a way that autonomous AI agents can reliably understand and use. That’s why dual-interface design is necessary. It separates human experience from machine access, allowing websites to serve both without compromise.

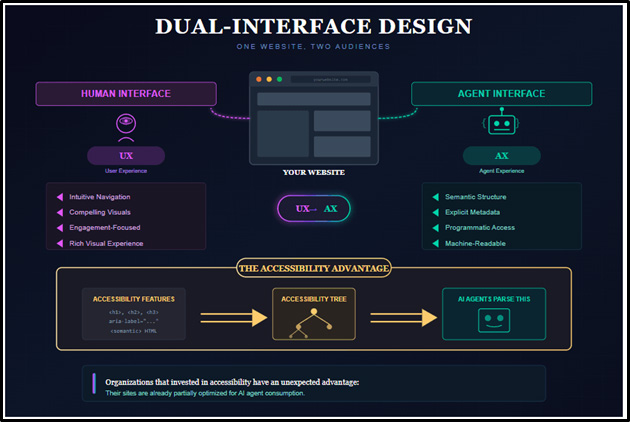

What Is Dual-Interface Design in the Agentic Web?

Dual-interface design is the architectural approach behind AI-agent-friendly websites. The concept is straightforward: maintain rich visual experiences for humans while providing a parallel layer optimized for machine comprehension.

For human visitors, websites continue delivering intuitive navigation, compelling visuals, and engagement-focused experiences. For AI agents, the same website exposes content through semantic structure, explicit metadata, and programmatic web access points. Design leaders describe this transition as moving from UX (User Experience) to AX (Agent Experience).

Why Building AI Agent-Friendly Websites Matters Now

Building AI agent-friendly websites has become a business-critical priority. AI-powered systems now generate a significant share of all internet traffic, marking a fundamental shift in who, or what, consumes web content.

According to Imperva’s 2025 Bad Bot Report, automated traffic accounted for 51% of all web traffic in 2024, surpassing human-generated traffic, with AI a major driver of that growth. Across the web as a whole, more than half of all requests no longer come from humans, and that share is widely expected to keep rising in 2026.

And the implications extend beyond raw traffic numbers. Gartner predicts that by 2028, 33% of enterprise software will include agentic AI capabilities, with 20% of customer interactions handled by AI agents rather than humans. These agents don’t just browse; they book appointments, compare products, execute purchases, and complete complex workflows without human intervention.

How to Build AI Agent-Friendly Websites (7 Elements of Agentic Web)

1. Semantic HTML5 and Structural Design

Semantic HTML5 is foundational to building AI-agent-friendly websites because it defines how machines understand structure, intent, and hierarchy. Semantic elements such as <header>, <nav>, <main>, <article>, <section>, and <footer> act as architectural signals, helping AI agents determine what content matters, how pages are organized, and where actions begin and end.

A page built with generic <div> and <span> tags provides little context to a machine. When your website uses proper tags, AI tools can quickly identify the most important content and where to find it.

Adopting semantic design means labeling every major page section with appropriate HTML elements. It also means using descriptive text for interactive elements, like “Download Full Report” or “Subscribe to Newsletter” rather than “Click Here.”

For icon-only buttons or complex interactive elements, ARIA (Accessible Rich Internet Applications) attributes provide invisible hints that tell machines what each element does, without affecting visual design.
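To make these signals concrete, here is a minimal audit sketch using Python’s standard-library `html.parser`. It counts the landmark elements listed above, flags generic link text such as “Click Here,” and flags icon-only buttons that lack an `aria-label`. The `SemanticAudit` class, the list of generic phrases, and the sample markup are illustrative assumptions, not a complete accessibility checker.

```python
from html.parser import HTMLParser

# Landmark elements named above as structural signals.
LANDMARKS = {"header", "nav", "main", "article", "section", "footer"}
# Illustrative, not exhaustive: link text that tells a machine nothing.
GENERIC_LINK_TEXT = {"click here", "here", "read more", "learn more"}


class SemanticAudit(HTMLParser):
    """Counts landmark tags and flags vague links and unlabeled buttons."""

    def __init__(self):
        super().__init__()
        self.landmarks = {}
        self.issues = []
        self._open = None   # "a" or "button" while inside one, else None
        self._attrs = {}    # attributes of the open <a>/<button>
        self._text = []     # text collected inside it

    def handle_starttag(self, tag, attrs):
        if tag in LANDMARKS:
            self.landmarks[tag] = self.landmarks.get(tag, 0) + 1
        if tag in ("a", "button"):
            self._open, self._attrs, self._text = tag, dict(attrs), []

    def handle_data(self, data):
        if self._open:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag != self._open:
            return
        # Normalize whitespace and case before comparing.
        text = " ".join("".join(self._text).split()).lower()
        if tag == "a" and text in GENERIC_LINK_TEXT:
            self.issues.append(f"vague link text: {text!r}")
        if tag == "button" and not text and not self._attrs.get("aria-label"):
            self.issues.append("icon-only button without aria-label")
        self._open = None


# Hypothetical page markup.
page = """
<header><nav><a href="/pricing">Pricing</a></nav></header>
<main><article>
  <a href="/report.pdf">Click here</a>
  <button aria-label="Search"><svg></svg></button>
  <button><svg></svg></button>
</article></main>
"""
audit = SemanticAudit()
audit.feed(page)
audit.close()
print(audit.landmarks)  # {'header': 1, 'nav': 1, 'main': 1, 'article': 1}
print(audit.issues)
```

A dedicated accessibility checker will catch far more, but a small script like this can help keep landmark structure and link labels from regressing between releases.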

Semantic structure establishes the foundation, but AI agents also need to discover, traverse, and reason about content across your site. That requires treating site architecture itself as part of your AI agent interface.

2. Site Navigation, Accessibility, and Discoverability

Beyond semantic tags, site navigation and architecture determine whether AI agents can reliably discover and traverse your content. In an agentic web, navigation is not just a UX concern—it is part of your machine-readable interface.

Most AI systems don’t browse visually. They follow links, parse the document object model (DOM), and consume structured signals through sitemaps and APIs. Well-designed navigation enables programmatic web access, improving both AI agent consumption and traditional SEO. This is a core pillar of dual-interface design, where human navigation and machine discovery coexist without conflict.

To make your site more navigable for AI agents:

- Use descriptive URLs

Make page URLs readable and meaningful. Cryptic URLs built from random IDs or query parameters can look spammy and confuse AI agents, so include descriptive keywords instead (e.g. …/pricing or …/product/iphone15 rather than …/prod?id=1234).

- Maintain an up-to-date XML sitemap

An XML sitemap exposes your site’s structure as a machine-readable interface. Keeping it current allows AI agents and search engine crawlers to efficiently discover, prioritize, and revisit important pages. Any significant content change should be reflected in the sitemap to preserve reliable programmatic web access. You can use this free XML sitemap generator tool to get started.

- Avoid frequent layout overhauls

Structural stability matters for AI agents. Frequent changes to navigation patterns, page hierarchy, or internal linking can break paths that agents have already learned. When agents lose reliable access points, they may misinterpret content or temporarily drop your site from results while relearning the structure, a risk that a stable machine-facing layer avoids.

- Don’t hide critical content behind scripts or logins

Many modern websites rely on heavy JavaScript, dynamic UI components, or multi-step interactions to reveal content. This creates barriers for AI agents, which often cannot execute complex client-side logic or navigate interactive flows.
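Descriptive URLs and an up-to-date sitemap work together. The sketch below, in standard-library Python, turns page names into readable slugs and writes a sitemap in the sitemaps.org format with a `<lastmod>` date per URL. The `slugify` and `build_sitemap` helpers and the `example.com` pages are hypothetical; note that this slug style hyphenates words (`iphone-15`), which is one common choice among several.

```python
import re
import unicodedata
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def slugify(name: str) -> str:
    """Turn a page or product name into a readable, lowercase URL segment."""
    # Strip accents (e.g. "é" -> "e"), then collapse anything non-alphanumeric into "-".
    ascii_name = unicodedata.normalize("NFKD", name).encode("ascii", "ignore").decode()
    return re.sub(r"[^a-z0-9]+", "-", ascii_name.lower()).strip("-")


def build_sitemap(base_url: str, pages: list[tuple[str, date]]) -> str:
    """Build a sitemaps.org XML document from (path, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for path, modified in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + path
        # <lastmod> uses W3C date format; date.isoformat() gives YYYY-MM-DD.
        ET.SubElement(url, "lastmod").text = modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; regenerate whenever content changes so <lastmod> stays accurate.
pages = [
    ("/pricing", date(2025, 1, 1)),
    ("/product/" + slugify("iPhone 15"), date(2025, 1, 1)),
]
xml = build_sitemap("https://example.com", pages)
print(xml)
```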

Even with strong AI agent architecture, there’s a critical limitation: most AI crawlers cannot execute JavaScript. If your site depends on client-side rendering to expose core content, it may be invisible to AI agents—regardless of how well other machine-readable interfaces are implemented.

3. Prerendering JavaScript Sites for AI Visibility

Most AI crawlers cannot execute JavaScript. If your site is built with React, Angular, Vue.js, or any framework that relies on client-side rendering, these crawlers see only the initial HTML shell, which is often little more than an empty container element. Everything loaded via JavaScript, including product details, pricing, and reviews, is effectively invisible to ChatGPT, Claude, and Perplexity: to these systems, it doesn’t exist.

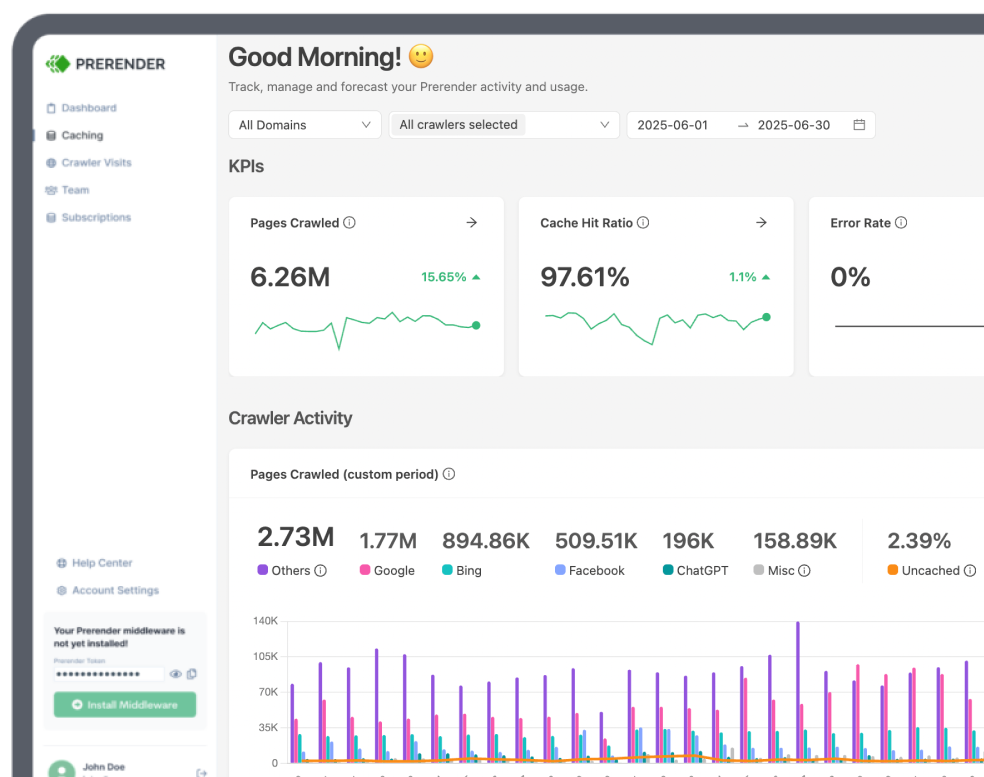

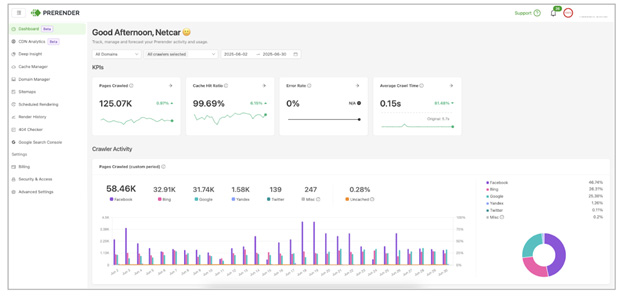
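To see why client-side rendering is a problem, the sketch below extracts text from raw HTML the way a crawler that doesn’t execute JavaScript would: it reads the markup exactly as served and ignores script contents. Both pages, a hypothetical SPA shell and its prerendered equivalent, are illustrative.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects the text present in raw HTML, without running any JavaScript."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0  # depth inside <script>/<style>, whose contents aren't page text

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def crawler_view(html: str) -> str:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Client-side-rendered shell: the product content only appears after bundle.js runs.
spa_shell = """<html><head><title>Acme Store</title></head>
<body><div id="root"></div><script src="/bundle.js"></script></body></html>"""

# The same page with the content already in the markup, as a prerendered snapshot delivers it.
prerendered = """<html><head><title>Acme Store</title></head>
<body><div id="root"><h1>Trail Shoe</h1><p>$129, in stock</p></div></body></html>"""

print(crawler_view(spa_shell))    # Acme Store
print(crawler_view(prerendered))  # Acme Store Trail Shoe $129, in stock
```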

Dynamic rendering with Prerender.io solves this by converting JavaScript-heavy pages into static HTML that AI crawlers can read. When a crawler visits, Prerender.io detects it and serves a prerendered HTML snapshot, while human visitors continue receiving the full JavaScript experience. This is exactly the dual-interface design an AI-agent-friendly website needs.
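The routing decision behind dynamic rendering can be sketched in a few lines: inspect the request’s user agent, serve a cached HTML snapshot to known crawlers, and serve the normal JavaScript app to everyone else. This is a simplified illustration of the technique, not Prerender.io’s implementation; the token list is illustrative and incomplete, and the helper names, snapshot cache, and user-agent strings are hypothetical.

```python
# Illustrative user-agent substrings only: real crawler lists are longer and
# change often. This is not Prerender.io's list or implementation.
CRAWLER_TOKENS = (
    "gptbot", "oai-searchbot", "chatgpt-user",  # OpenAI
    "claudebot",                                  # Anthropic
    "perplexitybot",                              # Perplexity
    "googlebot", "bingbot",                       # traditional search engines
)


def is_crawler(user_agent: str) -> bool:
    """Case-insensitive substring match against known crawler tokens."""
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_response(path: str, user_agent: str,
                    snapshots: dict[str, str], app_shell: str) -> str:
    """Dynamic rendering: crawlers get prerendered HTML, humans get the JS app."""
    if is_crawler(user_agent) and path in snapshots:
        return snapshots[path]  # static HTML with the content already in the markup
    return app_shell            # client-side app; the browser runs the JavaScript


# Hypothetical snapshot cache and simplified user-agent strings.
snapshots = {"/shoes/trail": "<h1>Trail Shoe</h1><p>$129, in stock</p>"}
app_shell = '<div id="root"></div><script src="/bundle.js"></script>'

print(choose_response("/shoes/trail", "Mozilla/5.0 (compatible; GPTBot/1.0)", snapshots, app_shell))
print(choose_response("/shoes/trail", "Mozilla/5.0 (Windows NT 10.0; Win64; x64)", snapshots, app_shell))
```

User-agent strings are easy to spoof and crawler lists change often, which is one reason to rely on maintained middleware or a service rather than a hand-written list.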

The benefits of using Prerender.io when building AI agent-friendly websites include:

- AI crawler compatibility: the crawlers behind major AI search tools, such as ChatGPT, Claude, and Perplexity, access your content as clean HTML.

- Structured data visibility: schema markup injected by JavaScript is included in the HTML that AI systems receive.

- Faster JS content crawling: crawlers receive instantly loadable HTML, improving crawl efficiency.

- Complete JS content indexing: JavaScript-generated content is part of the HTML that AI crawlers receive, so they can index it and surface it to users.

Prerender.io works with all popular JavaScript frameworks and integrates at the CDN, web server, or application level. You don’t need to change your tech stack to adopt Prerender.io: complete the 3-step installation process, and your website becomes more AI-friendly. For JavaScript-heavy sites like e-commerce platforms, SaaS products, or single-page applications (SPAs), get started with Prerender.io now for free!

Learn how to ensure your site gets cited on ChatGPT and other AI-powered search platforms.

4. Implementing Structured Data (Schema Markup)

If your website is a house and semantic HTML labels its rooms, structured data is a detailed inventory of what’s inside them. Schema markup uses a standardized vocabulary from Schema.org that Google, Bing, ChatGPT, and other AI tools recognize.

Schema acts like a hidden label that tells AI exactly what a piece of content represents, whether it’s an article, product, review, event, or FAQ. Where a search listing may show only your meta title and description, AI agents reading proper schema markup get explicit details such as prices, ratings, dates, and availability.

Key schema types to consider:

- Product: Pricing, features, availability, reviews

- SoftwareApplication: Features, platforms, reviews (ideal for SaaS)

- FAQPage and HowTo: Help AI agents pull answers directly into summaries

- Event: Dates, times, locations, registration links

- VideoObject and ImageObject: Metadata for media content

- Dataset: Publication date, licensing, categories for research content
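Schema markup is typically embedded as a JSON-LD `<script>` tag. As an illustration, the sketch below builds a Schema.org `Product` with an `Offer` and an optional `AggregateRating`, then wraps it in a script tag. The helper functions and product values are hypothetical.

```python
import json


def product_jsonld(name, description, price, currency, in_stock,
                   rating=None, review_count=None):
    """Build a Schema.org Product with an Offer and an optional AggregateRating."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            "availability": "https://schema.org/"
                            + ("InStock" if in_stock else "OutOfStock"),
        },
    }
    if rating is not None and review_count:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        }
    return data


def jsonld_script(data):
    """Wrap JSON-LD in a script tag; escaping "</" keeps the JSON from closing the tag early."""
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical product values.
tag = jsonld_script(product_jsonld(
    "Trail Shoe", "Lightweight trail running shoe", 129, "USD", True,
    rating=4.6, review_count=212,
))
print(tag)
```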

New to schema markup and need more guidance on adding it to your site? Check out this structured data guide.

Implementation uses JSON-LD scripts embedded in HTML. Verify existing schema using Google’s Rich Results Test. Structured data helps AI agents read and understand content. But what if you want them to interact directly with your services? That requires APIs.

5. API-First Architecture for AI Agents

While structured data lets AI read content intelligently, APIs allow agents to interact with your functionality directly. Without APIs, agents must scrape your site, interpret inconsistent layouts, and guess at data meanings. With APIs, you provide direct access to product information, availability, pricing, and documentation. This approach is faster, more accurate, and more likely to be referenced by AI tools.

If you provide an API sharing your feature list, pricing tiers, or integrations, an AI agent responding to “What SaaS tools integrate with Notion?” could include your product—without the user visiting your homepage.

What to aim for:

- JSON format: The standard data format AI tools prefer

- Documentation: Clear guides explaining available data and request methods

- Stability: APIs shouldn’t change without notice; use versioning

- Security: Proper access controls managing permissions
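Here is a minimal sketch of those four principles for a hypothetical SaaS pricing API, written as a plain Python function so it runs without a web framework: JSON responses, a version prefix in the path (`/v1/`), a public endpoint, and a private endpoint gated by an API key. The paths, data, key, and `handle`/`reply` helpers are all assumptions; a production service would run behind a real framework and load keys from a secret store.

```python
import hmac
import json

# Hypothetical data and key; a real service would load these from a database
# and a secret store.
PRICING = {
    "plans": [{"name": "Starter", "monthly_usd": 19}, {"name": "Team", "monthly_usd": 49}],
    "integrations": ["Notion", "Slack"],
}
API_KEYS = {"demo-key-123"}


def reply(status: int, payload: dict, **extra_headers: str) -> tuple[int, dict[str, str], str]:
    """Every response is JSON, the format AI tools handle most easily."""
    return status, {"Content-Type": "application/json", **extra_headers}, json.dumps(payload)


def handle(method: str, path: str, headers: dict[str, str]) -> tuple[int, dict[str, str], str]:
    """Return (status, headers, JSON body) for a small, versioned, read-only API."""
    if method != "GET":
        return reply(405, {"error": "method not allowed"}, Allow="GET")
    if path == "/v1/pricing":  # public: anyone, human or agent, may read pricing
        return reply(200, PRICING)
    if path == "/v1/account":  # private: requires "Authorization: Bearer <key>"
        key = headers.get("Authorization", "").removeprefix("Bearer ")
        # Constant-time comparison avoids leaking key contents through timing.
        if not any(hmac.compare_digest(key.encode(), k.encode()) for k in API_KEYS):
            return reply(401, {"error": "missing or invalid API key"})
        return reply(200, {"plan": "Team"})
    return reply(404, {"error": "not found", "path": path})


status, _, body = handle("GET", "/v1/pricing", {})
print(status, json.loads(body)["integrations"])
```

Putting the version in the path means a future `/v2/pricing` can change its response shape without breaking agents already built against `/v1/pricing`.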

Pro tip: document your APIs with the OpenAPI Specification so AI agents can discover endpoints and construct valid requests dynamically.
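An OpenAPI description is what lets an agent find an endpoint and build a valid request without reading prose documentation. The sketch below is a minimal OpenAPI 3.1 document for a hypothetical `GET /v1/pricing` endpoint, built as a Python dict and serialized to JSON; the server URL, schema fields, and `operationId` are illustrative assumptions.

```python
import json

# Minimal OpenAPI 3.1 description of a hypothetical pricing endpoint.
openapi_doc = {
    "openapi": "3.1.0",
    "info": {"title": "Example Pricing API", "version": "1.0.0"},
    "servers": [{"url": "https://api.example.com"}],
    "paths": {
        "/v1/pricing": {
            "get": {
                "operationId": "getPricing",
                "summary": "List pricing plans and supported integrations",
                "responses": {
                    "200": {
                        "description": "Current pricing plans",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "properties": {
                                        "plans": {
                                            "type": "array",
                                            "items": {
                                                "type": "object",
                                                "properties": {
                                                    "name": {"type": "string"},
                                                    "monthly_usd": {"type": "number"},
                                                },
                                                "required": ["name", "monthly_usd"],
                                            },
                                        },
                                        "integrations": {
                                            "type": "array",
                                            "items": {"type": "string"},
                                        },
                                    },
                                }
                            }
                        },
                    }
                },
            }
        }
    },
}

# Serve this at a stable URL, e.g. /openapi.json (a common convention, not a requirement).
spec_json = json.dumps(openapi_doc, indent=2)
print(spec_json)
```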

For organizations fully embracing the agentic web, the Model Context Protocol (MCP) is emerging as a universal standard for AI agent integration. Introduced by Anthropic in 2024 and since adopted by OpenAI, Google, and Microsoft, MCP provides a standardized way to connect AI systems to external data sources and tools; Anthropic likens it to a USB-C port for AI applications.

APIs and structured data handle technical infrastructure, but the content itself matters too. How you write and present information affects how well AI systems interpret and use it.

6. Content Optimization for AI (Natural Language Processing and Media)

It’s not just the back-end structure that matters. The way you write affects how well AI can interpret your content. AI agents use natural language processing to derive meaning, so clear, conversational writing matters.

Plain, unambiguous language is easier for AI to parse and cite. This doesn’t mean dumbing things down; it means:

- Avoiding unnecessary jargon

- Explaining concepts clearly

- Structuring content logically

For example, instead of “Event Details: Date: March 15, Time: 6 PM, Location: Main Hall,” use “Join us on March 15 at 6 PM in the Main Hall.” The second version is clearer and more likely to be correctly parsed by AI systems.

Clear content only helps if it’s accurate. AI agents that retrieve outdated information create problems for everyone, which brings us to data maintenance.

7. Ensuring Data Accuracy and Real-Time Updates

An often-overlooked aspect of building AI-friendly websites is maintaining accurate and up-to-date data on your site. AI agents may cache information or rely on data from their last visit. Stale content means AI might serve outdated information to users, so ensure you:

- Sync with real-time databases: for volatile data such as inventory or pricing, retrieve from live databases whenever anyone, human or AI, requests it.

- Provide timestamps: show last-updated dates on content pages or in API responses so AI agents can assess freshness; some systems prioritize recent content.

- Verify and monitor accuracy: audit exposed information periodically. Test queries in AI chatbots to confirm they return accurate information about your site.
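For the timestamp advice above, one option is to send freshness signals in both the HTTP headers and the JSON body. This sketch, with a hypothetical `fresh_response` helper and inventory record, sets a standard `Last-Modified` header, adds an ISO 8601 `last_updated` field, and sends `Cache-Control: no-cache` so HTTP caches revalidate before reusing a stored copy. Whether a given AI agent honors these headers varies by system.

```python
import json
from datetime import datetime, timezone
from email.utils import format_datetime


def fresh_response(record: dict, updated_at: datetime) -> tuple[dict[str, str], str]:
    """Attach freshness signals to a JSON API response.

    updated_at must be timezone-aware; it is converted to UTC.
    """
    updated_utc = updated_at.astimezone(timezone.utc)
    headers = {
        "Content-Type": "application/json",
        # HTTP-date format required for Last-Modified (always expressed in GMT).
        "Last-Modified": format_datetime(updated_utc, usegmt=True),
        # Tells HTTP caches to revalidate with the server before reusing a stored copy,
        # which suits volatile data such as inventory or pricing.
        "Cache-Control": "no-cache",
    }
    body = dict(record, last_updated=updated_utc.isoformat())
    return headers, json.dumps(body)


# Hypothetical inventory record.
headers, body = fresh_response(
    {"sku": "TRAIL-01", "in_stock": 14, "price_usd": 129.0},
    datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc),
)
print(headers["Last-Modified"])  # Wed, 01 Jan 2025 12:00:00 GMT
print(body)
```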

Opening your site to AI agents creates opportunity, but also responsibility. As you make more data accessible, security and governance become critical.

Data Governance and Security for AI Agent Access

With greater data openness comes greater responsibility. Autonomous agents may interact with sensitive site areas when placing orders or accessing user data, so you need to put the following safeguards in place:

- Authentication and authorization: APIs allowing transactions or non-public data access should require secure authentication (OAuth tokens, API keys). Distinguish between public endpoints and private actions.

- Rate limiting and abuse prevention: AI agents can send requests far faster than humans, which risks overloading your systems. Implement rate limits on APIs and consider bot detection for website traffic.

- Data encryption: use HTTPS everywhere and encrypt stored data at rest.

- Privacy compliance: if AI agents access personal data, ensure GDPR, CCPA, and other regulatory compliance. Document what data AI services can fetch.

- MCP security considerations: security researchers have identified MCP concerns, including prompt injection risks, tool permission scope issues, and credential management challenges.
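Rate limiting is commonly implemented with a token bucket: each client gets a bucket that holds up to `capacity` tokens and refills at a steady `rate`, so short bursts are allowed but sustained floods are not. A minimal single-process sketch follows; the `TokenBucket` class, the per-client limits, and `check_rate_limit` are hypothetical, and the injectable clock exists only to make the behavior easy to demonstrate.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity  # start full
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


buckets: dict[str, TokenBucket] = {}


def check_rate_limit(client_id: str) -> bool:
    """One bucket per API key or client; False would map to HTTP 429 Too Many Requests."""
    bucket = buckets.get(client_id)
    if bucket is None:
        bucket = buckets[client_id] = TokenBucket(capacity=10, rate=2)
    return bucket.allow()


# Demonstration with a fake clock so the timing is deterministic.
fake_now = [0.0]
bucket = TokenBucket(capacity=3, rate=1, clock=lambda: fake_now[0])
results = [bucket.allow() for _ in range(4)]
print(results)  # [True, True, True, False]
fake_now[0] = 1.0  # one second later, one token has been refilled
refilled = bucket.allow()
print(refilled)  # True
```

This keeps state in memory, so it is not thread-safe and won’t share limits across servers; multi-instance deployments typically keep counters in a shared store instead.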

These principles apply across industries, though implementation priorities vary by business model.

Final Thoughts on Building AI Agent-Friendly Websites

AI agents are already navigating the web and making decisions for users. Optimizing for them is no longer optional.

If your SEO foundations are solid, focus on integrating APIs, structured data, and clear architecture so agents can retrieve information easily. If not, start with the basics: clean structure, semantic markup, and fast performance.

For JavaScript-heavy sites, one step makes an immediate difference: dynamic rendering with Prerender.io. Most AI crawlers can’t execute JavaScript, which means your content may be invisible to ChatGPT, Claude, Perplexity, and other AI platforms, even if you rank well on Google.

Prerender.io serves pre-rendered HTML to AI crawlers while delivering the full JavaScript experience to humans. It’s the fastest path to AI visibility for modern web applications.

Just as mobile responsiveness became essential a decade ago, AI agent readiness will define the next generation of successful digital platforms. Organizations that prepare now will be best positioned to thrive in an increasingly AI-mediated economy.

Ready to make your website visible to AI agents? Start with a free Prerender.io account and see the impact firsthand.