Implementing SSR for enterprise SEO fixes many of the visibility issues caused by JavaScript, which is why it’s often treated as the go-to solution for large-scale websites. But rendering content on the server is only one piece of the puzzle, especially if your goal is to improve both search and AI discovery.

The limitations of SSR usually show up after deployment. As Googlebot scales its crawling, your origin server (now responsible for rendering every request in real time) starts to introduce latency. That slowdown becomes a signal: crawl rates drop, and pages that are technically correct get indexed less frequently, or not at all. At the same time, cache invalidation delays can prevent updated content from reaching Googlebot for hours or even days.

None of this means SSR was the wrong approach. It means it solves a specific problem, while enterprise SEO challenges extend beyond rendering. This article covers where those gaps appear, what causes them at the infrastructure level, and how dynamic rendering fills them without requiring changes to your SSR architecture.

TL;DR – Why Enterprise SEO Can Still Break After SSR

- SSR fixes how your JavaScript pages are rendered, but it doesn’t fix how reliably crawlers find, index, and return to them at scale.

- The bigger your site, the more crawler traffic competes with real user traffic — and the more your server overhead affects how often Googlebot shows up.

- When content changes faster than your cache refreshes, Googlebot sees outdated pages. That’s a freshness problem, not a rendering problem.

- Enterprise sites are rarely built on one uniform tech stack. Rendering inconsistencies on pages outside your core SSR setup are more common than most teams expect.

- Not all AI crawlers execute JavaScript. Missing HTML content on any page is content that does not surface in AI-generated answers.

- Dynamic rendering handles what crawlers see at the infrastructure level, independently of your SSR setup, without touching your application.

What SSR Fixes for Enterprise Sites (And the Visibility Gaps It Leaves Behind)

To understand where SSR falls short, it helps to be precise about what it actually does.

SSR executes JavaScript on the server and delivers fully rendered HTML to the client on the initial HTTP response. The browser receives a complete document it can parse and display immediately, rather than a near-empty HTML shell that JavaScript then populates after load.

Before SSR, client-side rendering (CSR) was the default for JavaScript-heavy applications. Googlebot would arrive at a page, receive a shell with a single <div id="root">

Googlebot does execute JavaScript, but through a secondary rendering queue that can lag behind the initial crawl by days or weeks. Research by Onely found that Google needs 9x more time to crawl JavaScript pages than plain HTML, a direct consequence of this rendering queue. This means that content that only exists after JavaScript rendering may not be indexed promptly, or at all (if Googlebot deprioritizes re-rendering).

SSR eliminates that lag. The rendered HTML is the initial response, and it behaves like a static document from the moment Googlebot arrives. For most dynamic sites, that fix is sufficient, but enterprise sites are on another level. At enterprise scale, SSR’s core assumptions break down in ways that often have nothing to do with rendering quality.

Where Enterprise Websites Break SSR’s Rendering Benefits

SSR was designed for manageable page counts, moderate content change frequency, and an infrastructure that can absorb rendering load. Enterprise sites systematically violate all three. The result is three compounding failure modes.

1. Origin Server Overhead for SSR

Every SSR request requires your origin server to render the page in real time. Under normal user traffic, this is manageable with cache-rendered output, set TTLs, and horizontal scaling. Search crawler traffic behavior breaks this model.

Googlebot crawls aggressively, ignores your traffic patterns, and can hit a large site hundreds of times per hour. Many of those requests land on uncached pages, each one triggering a full render cycle alongside your user traffic.

This creates two problems. First, your server slows down. Second, Googlebot notices. When response times degrade, Googlebot interprets that as a signal to back off and reduces its crawl rate. Google’s own documentation confirms this directly: if server response times are consistently slow, the crawl rate limit decreases to avoid overloading the server.

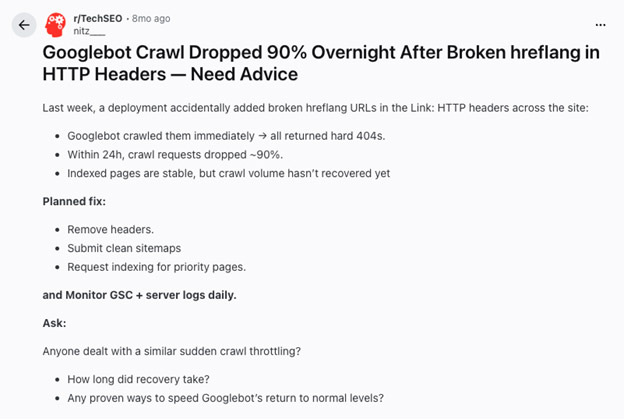

In documented cases, such as one reported by Search Engine Journal, server-side errors have caused crawl rates to drop by 90% within 24 hours (see this Reddit post, for instance). Pages that would have rendered correctly never get indexed, not because of a rendering bug, but because the crawler stopped before reaching them.

You’re then stuck choosing between two bad options: throttle Googlebot to protect performance, or over-provision your website’s infrastructure specifically to absorb crawler load. Neither fixes why crawler traffic competes with user traffic in the first place.

2. Cache Invalidation Lag: Why Googlebot and AI Crawlers See Stale Content

The practical response to SSR rendering overhead is caching: render each page once, store the output, and serve it for subsequent requests. The caching problem in JavaScript surfaces when content changes.

Enterprise sites have dozens of content types sitting behind multiple caching layers in sequence: application cache, CDN edge, and reverse proxy. A content update has to invalidate all of them correctly.

For a single page edit, this is manageable. For a bulk catalog update that touches hundreds of thousands of URLs, it frequently isn’t. The database updates, but stale HTML persists at the CDN or reverse proxy because the invalidation signal did not fully propagate, or because a bulk update triggered partial invalidation that missed a subset of affected URLs.

Essentially, Googlebot crawls those pages and receives the old content. From its perspective, nothing changed. But for ecommerce, the gap between a pricing or availability update and Googlebot seeing it is a direct revenue problem. For news and media, where freshness influences rankings, cache staleness degrades indexation quality in ways that surface in traffic.

3. Rendering Inconsistencies Across Page Types

Enterprise sites are rarely architecturally uniform. A large ecommerce site might have category pages in a React SSR setup, product pages on a different system with partial SSR coverage, editorial content on WordPress with a JavaScript frontend, and the localized versions of all of the above built by regional teams with different framework choices.

Pages outside the core SSR configuration fall back to client-side rendering. This affects more page types than most teams expect:

- Legacy pages never converted during the SSR migration

- Third-party embedded content where the vendor controls rendering

- Localized pages built by teams with different technical standards

- Campaign and landing pages built outside the main application

- Faceted navigation and filtered listing pages, where dynamic URLs create rendering edge cases

SSR coverage of 80% of page types does not solve 80% of the rendering problem. The pages that fall back to client-side rendering often represent a disproportionate share of strategically important content: high-intent landing pages, localized product catalogs, and faceted search results.

These three enterprise SEO SSR failure modes compound each other. But even on pages where SSR is working perfectly, there is a separate problem: the distinction between a page rendering correctly and a page actually getting indexed.

Why Your Pages Are Still Not Indexed After SSR Implementation

SSR ensures a page renders correctly when a crawler requests it. It does not ensure that Googlebot reaches the page, requests it at a useful frequency, or receives current content when it does. Rendering, crawling, and indexation are distinct processes.

Crawl Budget: The Allocation Problem

Crawl budget is the number of pages Googlebot will crawl on a given site within a given period. Google allocates it based on historical crawl rate, server response times, content freshness, and signals about how valuable the site’s content is. A five-million-URL site will not have all five million pages crawled daily, because Googlebot prioritizes, and your infrastructure influences that prioritization with every request.

Top tip: follow these tips to optimize your site for better crawl budget spend.

Of all the infrastructure signals Google weighs, server response latency is the most punishing, as it directly degrades crawl budget. When SSR rendering overhead slows your origin server’s response, Googlebot observes that latency and reduces its crawl rate. Fewer pages get crawled per budget cycle.

Cache staleness compounds this. When Googlebot re-crawls a page and finds the same content it previously saw, it may reduce the page’s crawl frequency and reallocate budget elsewhere. The pages at the bottom of Googlebot’s priority list tend to be the ones that most need fresh indexation: long-tail product pages, localized variants, filtered category pages, and newly created content. These are also where ecommerce and publishing revenue are concentrated.

Content Freshness Problem: Why SSR SEO Doesn’t Solve it

For frequently updated sites, the gap between a content update and Googlebot seeing it is a measurable SEO problem. In ecommerce, product pages showing outdated pricing or availability send poor quality signals to Googlebot, and pages that consistently show stale information can lose rankings relative to competitors with stronger freshness signals. In news and media, freshness is a direct ranking factor. Stories indexed promptly after publication rank better for time-sensitive queries than stories where indexation lags by hours.

SSR alone does not close the freshness gap if cache invalidation is unreliable. A page may render correctly on the next crawl, but if that crawl happens three days after the update, the freshness advantage is already gone.

Cache staleness and crawl budget are problems Googlebot can at least partially recover from over time. The indexation gaps affecting AI crawlers are permanent and growing in business impact as AI search becomes a primary discovery channel.

SSR for AI Visibility: Solving the LLM Content Extraction Gap

Enterprise SEO has historically centered on Googlebot. The current landscape is different, and the numbers are moving fast.

According to Cloudflare’s 2025 Year in Review, AI bots now account for 4.2% of all HTML requests across the web. GPTBot alone grew 305% in request volume between May 2024 and May 2025. Overall, AI and search crawler traffic grew 18% year-over-year. These crawlers are actively indexing your site right now, and most of them cannot execute JavaScript.

AI-powered search products from OpenAI, Perplexity, Google, Anthropic, and others now index and cite web content directly in generated answers. The crawlers behind these systems have fundamentally different technical characteristics than Googlebot, and SSR compatibility with them is not guaranteed.

Why Most AI Crawlers Cannot See Your JavaScript Content

Most AI crawlers do not execute JavaScript, and this detail is critical for SSR.

When Googlebot visits your site, it makes two passes: an initial crawl of the HTML response, then a secondary rendering pass where it executes JavaScript and indexes the resulting DOM. AI crawlers make one request. They send an HTTP GET request, receive the HTML response, extract the content, and move on. No secondary rendering pass, no JavaScript execution, no return visit.

What is in the initial HTML response is what gets extracted. What is not in the initial HTML response is invisible.

On pages where SSR is consistently implemented and delivers complete HTML in the initial response, AI crawlers see complete content. On pages where SSR is missing or inconsistent, they see whatever partial content the HTML shell contains, which may be very little.

LLM Content Extraction and Structured Data

LLM content extraction depends on clean, complete, well-structured HTML. This matters most for structured content: product specifications, pricing, ingredients, technical specifications, dates, and authorship.

If a product page delivers an empty <div class="product-specs">

The same applies to schema.org markup in JSON-LD. If your structured data is injected by JavaScript rather than present in the server-delivered HTML, AI crawlers miss it entirely. This affects citation quality and contextual relevance in AI-generated answers, not just traditional rich results in Google Search.

Why Rendering Inconsistencies Hit AI Crawlers Harder

The rendering inconsistencies described above have more severe consequences for AI crawler compatibility than for Googlebot compatibility.

Googlebot has a secondary rendering queue. The process is slow and imperfect, but partial recovery from rendering failures is possible. AI crawlers have no equivalent. A single request returns incomplete HTML, available content is extracted, and the page is not revisited. So rendering inconsistencies that Googlebot eventually works through are ones that AI crawlers never compensate for.

For enterprise sites with inconsistent SSR coverage across different tech stacks, teams, and markets, AI crawlers are building their understanding of your site from whatever incomplete HTML the first request returned. There is no correction pass.

The question is not whether SSR creates a visibility gap. It does. The question is what infrastructure layer can close it without requiring your teams to rebuild their SSR setup.

Prerender.io vs. Server Side Rendering (SSR)

The Prerender.io vs. SSR debate is often framed as a choice between competing approaches, but they operate at different layers, solve different problems, and are designed to work together.

Dynamic Rendering vs. Server-Side Rendering: What Each Approach Does

SSR is an application architecture decision made at the framework level. It determines how your application generates and delivers HTML, with downstream effects on Time to First Byte, client-side hydration, framework compatibility, developer experience, and infrastructure choices.

Prerender.io handles SEO rendering at the edge, intercepting requests by user agent and serving pre-rendered static HTML to crawlers before they reach your application’s pipeline. Human visitors continue through your normal application and receive the full dynamic experience. Crawlers receive complete HTML directly from Prerender’s cache.

The two coexist without conflict because they handle different parts of the same request flow. SSR owns the user experience layer. Prerender.io owns what crawlers see. Neither changes what the other does.

How Prerender.io Closes the Enterprise SEO Gaps

Prerender.io addresses the specific failure modes that make SSR for enterprise SEO more complicated than a standard implementation.

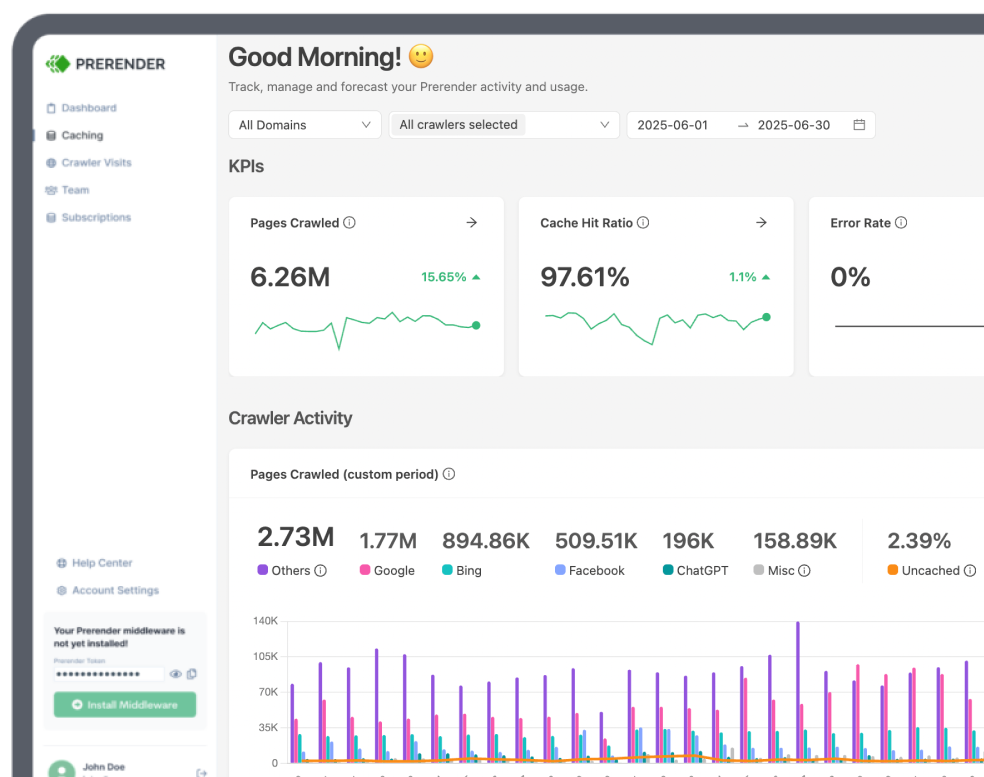

Origin server overhead is eliminated for crawler traffic. Prerender.io serves pre-rendered pages from a CDN layer at an average of 0.03 seconds per request, so Googlebot and AI crawlers receive responses without triggering rendering cycles on your origin servers. The performance comparison to the SSR origin rendering is not marginal.

Because responses come from Prerender’s cache rather than your application, cache freshness is also managed independently of your application stack. You can configure refresh intervals per URL pattern and trigger invalidation on content updates, without depending on your application’s cache invalidation working correctly across every layer. Learn more about cache management in Prerender.io.

Rendering consistency follows from the same architectural separation. Prerender.io operates at the HTTP request layer, not the application layer, so pages that fall back to client-side rendering receive complete HTML for crawlers regardless of whether they were built in React, Next.js, Vue, a legacy CMS, or a third-party platform.

This is what makes Prerender.io a scalable JS rendering solution: coverage expands with your site without requiring framework-level changes.

That consistency is what makes AI crawler compatibility structural rather than incidental. Prerender serves complete pre-rendered HTML from the initial response, so AI crawlers receive complete content regardless of how the page was built. Structured data, product specifications, prices, and any other JavaScript-populated content are present in the HTML they extract.

All of this compounds into crawl budget gains. Faster response times from Prerender.io’s CDN-served pages mean Googlebot observes better server performance across the board, which correlates directly with higher crawl budget allocation.

How Prerender.io Helps Enterprise Websites Win the Google and AI Search

Popken Fashion Group, a retailer running over 30 domains, saw approximately 15,000 additional pages indexed in a single market after reactivating Prerender.io following a brief outage. When the tool was turned off due to an internal technical issue, rankings dropped across markets almost immediately. When it was re-enabled, rankings recovered within a week. Prerender.io was not a supplementary tool in that scenario; it was load-bearing infrastructure.

Haarshop, with 35,000+ products across Dutch and German domains, went from struggling to surface products in SERPs to indexing 10,000 pages per day after implementation, with organic traffic improving by over 50%. Eldorado saw an 80% traffic improvement after fixing SPA rendering issues through Prerender.

These results reflect a consistent pattern: the indexation gap between what a site publishes and what crawlers can see is a revenue gap. Closing that gap does not require rebuilding your SSR architecture. It requires infrastructure that operates at the crawler layer.

Scalable JS Rendering: Why In-House SSR Fails at Enterprise Scale

The “Prerender.io vs. SSR” debate is often framed as a choice between two ways to do the same thing. Technically, that’s true: both deliver rendered HTML to a crawler. But the architectural cost of those two paths couldn’t be more different.

SSR is an application-level burden. It requires your developers to manage Node.js environments, handle complex hydration logic, and constantly patch rendering bugs as your frontend evolves. Prerender.io moves that entire responsibility to the infrastructure layer.

If you are currently running an in-house SSR setup and still seeing indexation gaps, your SSR isn’t “failing”—it’s simply being asked to do something it wasn’t built for: managing massive crawler volume.

- In-House SSR is Fragile: Every code change in your React or Next.js app risks breaking your SSR output. If a component fails to hydrate correctly on the server, Googlebot sees a broken page.

- In-House SSR is Expensive: Scaling an origin server to handle millions of uncached requests from Googlebot and a dozen different AI crawlers requires massive over-provisioning of resources.

- Prerender.io is Decoupled: Because Prerender handles rendering at the edge (the HTTP request layer), it doesn’t care how your application is built. It intercepts the crawler, renders the page in a dedicated headless browser, and serves a perfect HTML snapshot.

Before You Move From SSR to Prerender.io – An Enterprise Checklist

If you’re ready to offload your SEO rendering from your internal team to a managed solution, follow these steps:

- Identify “SSR-Light” Areas: Audit your site for page types (like faceted search or legacy landing pages) where your in-house SSR coverage is spotty. These are your first wins.

- Audit AI Crawler Access: Verify that your current setup is delivering 100% of your structured data and product specs in the initial HTML. If it isn’t, AI search engines aren’t seeing you.

- Update Your Middleware: Prerender.io integrates at the CDN or Middleware layer. This means you can “turn it on” for specific user agents (Googlebot, GPTBot) while keeping your current setup for humans.

- Monitor Your Crawl Stats: Within 72 hours of shifting your crawler traffic to Prerender.io, check Google Search Console. You should see a sharp drop in “Average Response Time” and a corresponding lift in “Total Crawl Requests.”

Fix Your Enterprise SEO Discovery Issues with Prerender.io

SSR SEO coverage solves the rendering problem it was designed to solve. The gaps covered in this article, origin server strain, cache invalidation failures, rendering inconsistencies across page types, and AI crawler invisibility, are infrastructure problems that live at a different layer. They do not go away by improving your SSR implementation. They go away by adding the right infrastructure alongside it.

Every day these gaps persist is a day Googlebot is crawling fewer pages than it should, AI crawlers are building an incomplete picture of your site, and content you have published is not reaching the audiences searching for it.

Prerender.io takes a few hours to install, integrates with any JavaScript framework and most CDN and backend configurations, and starts serving complete HTML to crawlers immediately. There is no code rewrite, no SSR migration, and no ongoing maintenance burden after setup.

The indexation gap between what your site publishes and what crawlers can see is a revenue gap. Start your free trial at Prerender.io today and close it.

FAQs – Adopting SSR to Fix Enterprise SEO Problems

Why Are My Pages Not Being Indexed Even With SSR?

Pages may not be indexed even with SSR because server latency reduces Googlebot’s crawl rate, cache invalidation delays lead to stale content, or certain page types still have rendering inconsistencies.

Check response times under crawler load, confirm cache updates propagate correctly, and audit whether all pages return complete HTML on the initial response.

Why Can’t AI See My JavaScript Content?

AI can’t see your JavaScript content because AI crawlers don’t execute JavaScript—they only extract what’s available in the initial HTML response. Any content rendered client-side, like product details, pricing, or structured data, simply isn’t visible to them.

The fix is to ensure all critical content is included in the server-delivered HTML on the first request. Solutions like Prerender.io handle this by serving fully pre-rendered HTML to AI crawlers without requiring changes to your existing architecture.