For enterprise websites, SEO isn’t about chasing incremental ranking gains on Google SERPs or brand mentions in ChatGPT results. It’s about making website visibility predictable at scale.

When a site spans millions of URLs, technical SEO and AEO optimization become infrastructure challenges. Take JavaScript rendering issues, for instance: JS-heavy frontends and dynamic content mean search engines and AI crawlers can’t always see and process the content right away.

In this guide, we break down six enterprise-grade SEO and AI visibility optimization elements that directly affect product discoverability across search engines and AI systems.

1. Fix JavaScript SEO Rendering Issues in Enterprise SEO

On enterprise websites, critical product information (product prices, availability, and promotions) is often hidden behind JavaScript. Consequently, search engines and AI tools struggle to see content that loads dynamically, especially on large sites where pages change constantly. This lack of visibility makes enterprise SEO optimization difficult, even for teams following SEO best practices.

This is a common problem in enterprise SEO, especially for ecommerce, travel, and marketplace platforms with millions of pages, frequent updates, and multiple languages. The outcome of this JavaScript SEO challenge is costly and familiar:

- Wrong prices or availability shown in Google search results

- Outdated product information used in AI-generated answers

- Visibility gaps across markets and catalogs

The issue isn’t your enterprise SEO strategy; it’s that your content isn’t fully visible to search crawlers when it matters. Without reliable JavaScript rendering, enterprise SEO optimization breaks down at scale.

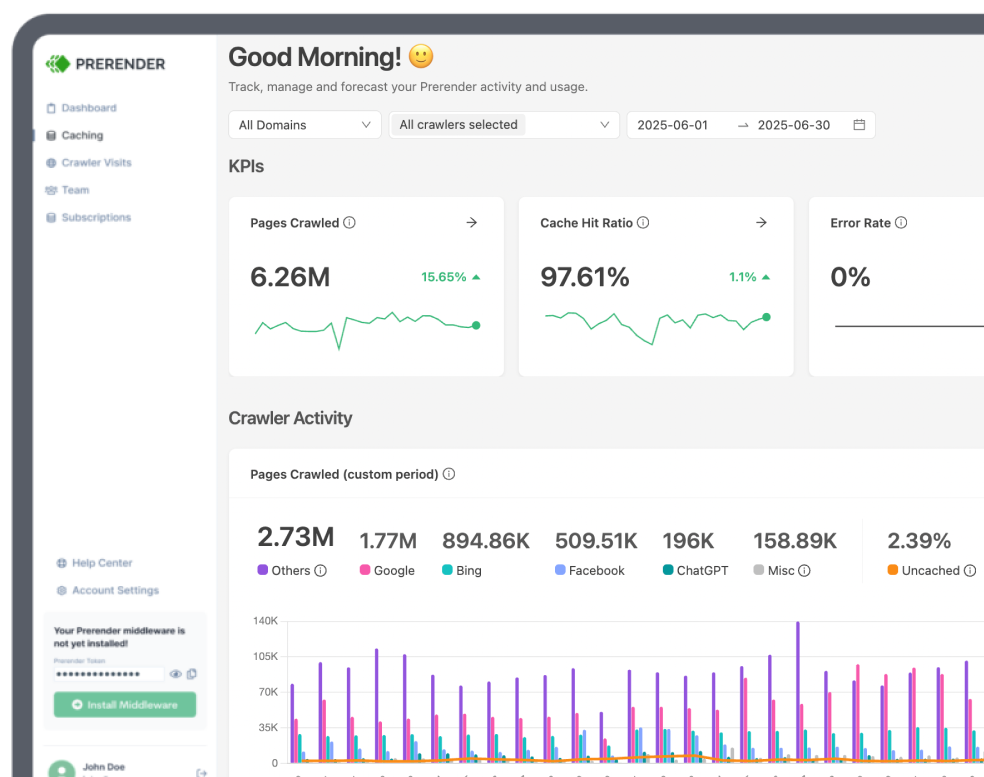

How Prerender.io Keeps Product Pages Visible in Search and AI

Prerender.io helps search engines and AI platforms keep up with frequent content updates by preparing your JavaScript pages in advance and serving them as simple, readable HTML.

Instead of relying on Google or AI tools to load and interpret JavaScript updates on their own (a process that can take days or even weeks), Prerender.io’s dynamic rendering delivers up-to-date versions of your pages immediately. On average, JavaScript websites using Prerender.io load for crawlers in 0.05 seconds, compared to 5.2 seconds without it.

This ensures that search engines and AI systems always access the current state of the page, including prices, stock levels, and promotions.

With Prerender.io in place, enterprise websites can expect the following benefits:

- Google sees accurate, current product information

- Rich results are generated from complete page content

- AI tools like ChatGPT, Claude, and Gemini use up-to-date data

With Prerender.io’s help, as your product data changes, search engines and AI can keep pace, instead of falling behind.
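To make the idea of dynamic rendering concrete, here is a minimal sketch of the routing decision behind it: crawlers get ready-made HTML, while regular visitors get the JavaScript app. This is not Prerender.io’s actual implementation. The token list and the function names `is_crawler` and `choose_response` are assumptions made up for illustration.

```python
# Hypothetical list of crawler User-Agent tokens (an assumption for this
# sketch); a production setup would maintain a much longer list.
CRAWLER_TOKENS = ("googlebot", "bingbot", "gptbot", "claudebot", "perplexitybot")


def is_crawler(user_agent: str) -> bool:
    """Return True if the User-Agent header contains a known crawler token."""
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_response(user_agent: str, prerendered_html: str, app_shell_html: str) -> str:
    """Serve static, pre-rendered HTML to crawlers and the JS app shell to everyone else."""
    return prerendered_html if is_crawler(user_agent) else app_shell_html
```

In practice this check usually lives in middleware or at the CDN edge, so the application code itself doesn’t need to change.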

Learn how Prerender.io supports enterprise SEO and drives measurable revenue impact.

Popken Boosted Product Visibility Across 32 Ecommerce Domains With Prerender.io

One enterprise customer using Prerender.io to solve JavaScript SEO challenges is Popken. As one of Europe’s largest plus-size fashion retailers, Popken operates 32 ecommerce domains across 16 countries. With millions of JavaScript-driven product and category pages, Popken struggled to keep stock levels, availability, and other product information consistently visible in search.

After adopting Prerender.io, the team stabilized rankings across markets, expanded indexation by thousands of pages, and ensured search engines and AI platforms always accessed accurate, up-to-date product information.

When Prerender.io was temporarily turned off, organic visibility dropped almost immediately. Once it was reactivated a week later, organic traffic bounced back, clearly showing the impact of JavaScript rendering on enterprise SEO performance.

Beyond visibility, Popken reduced SEO-related engineering effort, cut tooling costs by 50%, and projects 10% annual revenue growth from improved search and AI discoverability. Read Popken and Prerender.io’s success story.

“When we reactivated Prerender.io, our domain visibility quickly started to increase within the week,” said Christoph Hein, Head of SEO at Popken Fashion Group.

2. Handle HTTPS and Status Codes

There are two main things to work on here: serving HTTPS pages and handling error status codes.

Hypertext Transfer Protocol Secure (HTTPS) has been a confirmed ranking signal since 2014, when Google stated that it uses HTTPS as a lightweight signal. It encrypts traffic between the browser and your server, which plain HTTP doesn’t do, and that is crucial for modern websites that handle sensitive information.

HTTPS is also a factor in a more impactful ranking signal: page experience.

Transitioning to HTTPS can give you extra points with Google and increase your customers’ sense of security, which in turn can help conversions. (Note: There’s also a chance your potential customers’ browsers and Google will block your HTTP pages.) And if neither search engines nor users can access your content, what’s the point of it?

The second thing to monitor and manage is error codes.

Finding and fixing error codes

Error codes themselves are not actually harmful.

They tell browsers and search engines the status of a page, and when set correctly, they can help you manage site changes without causing organic traffic drops (or at least keep them to a minimum).

For example, after removing a page, it’s fine to return a 404 Not Found status.

(Note: You’ll also want to add links to help crawlers and users navigate to relevant pages.)

However, problems like soft 404 errors – pages that show a “not found” message or no real content while still returning a 200 OK status – can cause indexation problems for your website. Because the server reports success, Google can still index the URL, so users may find your page on Google, but when they click, they’ll land on a 404 not found message.

You can fix soft 404s by returning a real 404 (or 410) status for pages that are gone, or by redirecting the URL to a working and relevant page on your domain.

Other errors you’ll want to handle as soon as possible are:

- Redirect chains – these are chains of redirection where Page A redirects to Page B, which then redirects to Page C, and so on, until finally arriving at the live page. They waste crawl budget, slow down page loads, and harm page experience. Make every redirect a one-step redirect.

- Redirect loops – unlike chains, these never arrive anywhere. This happens when Page A redirects to Page B, which redirects back to Page A (of course, loops can become as large as you let them), trapping crawlers and making them time out without crawling the rest of your website.

- 4XX and 5XX errors – sometimes, pages break because of server issues or unwanted results from changes and migrations. In situations like these, you’ll want to handle all of these issues as soon as possible to avoid getting flagged as a low-quality website by search engines.

- Unnecessary redirects – when you merge content or create new pages covering older pages’ topics, redirecting is a great tool to pass beneficial signals. However, sometimes it doesn’t make sense to redirect, and it’s best just to set them as 404 pages.
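As a rough illustration of how chains and loops can be spotted, the sketch below walks a redirect map exported from a crawl. The map format (source URL to target URL) and the function names are assumptions for this example, not the output of any particular tool.

```python
def follow_redirects(start, redirects):
    """Follow redirects from `start`; return (path, is_loop)."""
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:  # we came back to a URL already visited
            return path, True
        seen.add(current)
    return path, False


def audit_redirects(redirects):
    """Split redirecting URLs into chains (more than one hop) and loops."""
    chains, loops = {}, {}
    for url in redirects:
        path, is_loop = follow_redirects(url, redirects)
        if is_loop:
            loops[url] = path
        elif len(path) > 2:  # start -> intermediate -> final
            chains[url] = path
    return chains, loops


def flatten(redirects):
    """Point every non-looping redirect straight at its final destination."""
    flat = {}
    for url in redirects:
        path, is_loop = follow_redirects(url, redirects)
        if not is_loop:
            flat[url] = path[-1]
    return flat
```

Running `flatten` over the map gives you the one-step redirects to deploy, while anything reported in `loops` needs a manual decision about which URL should be the live one.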

You can use a tool such as Screaming Frog to imitate Googlebot and crawl your site. This tool will find redirect chains, redirect loops, and 4XX and 5XX errors and organize them in easy-to-navigate reports. Also check Google Search Console’s reports for 404 pages under the Indexing tab.

Once you have the reports, work your way through the list and fix each issue.

Is Your Enterprise Website’s SEO and AEO Healthy?

For large websites, improving SEO and AI SEO performance isn’t just about creating new content; it’s also crucial to ensure your older, existing content still brings in visitors. When did you last check how well your entire website performs on SERPs and AI searches?

Get a Free SEO Audit with Prerender.io

Find out how well your enterprise site’s SEO is doing with Prerender.io’s free site audit tool. We’ll show you:

- How easily your site can be found by Google, ChatGPT, and other AI search engines.

- Your page delivery speed.

- Guides to help you fix common technical SEO problems.

Take control of your enterprise website’s SEO health today.

3. Optimize Site Architecture

Your website’s architecture is the hierarchical organization of the pages starting from the homepage.


For small sites, architecture is rarely top of mind, but for enterprise websites, a clear and easy-to-navigate structure improves crawlability, indexation, usability, and scalability.

When you create a good site structure, your URLs will be easier to categorize and manage, thus making it easier for collaborators to create new pages without conflicting slugs or cannibalizing keywords.

Search engines will also get more cohesive internal linking signals, which helps them recognize your thematic authority and find deeper pages within your domain, reducing the chance of orphan pages.
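One way to check how well a structure holds up is to measure click depth from the homepage and list pages that no internal link reaches. Here is a minimal sketch, assuming you already have an internal link graph (page to linked pages) from a crawl. The function names and the input format are assumptions for illustration.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search: number of clicks needed to reach each page from `home`."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links, home, all_pages):
    """Pages known to exist (e.g. from the CMS) that no internal link path reaches."""
    reachable = click_depths(links, home)
    return sorted(set(all_pages) - set(reachable))
```

Pages with a high click depth are candidates for better internal linking, and anything returned by `orphan_pages` should either be linked from a relevant category page or retired.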

4. Improve Mobile Performance and Friendliness

Mobile traffic has increased exponentially in recent years. With more technologies making it easier for users to search for information, make transactions, and share content, this trend is not slowing down.

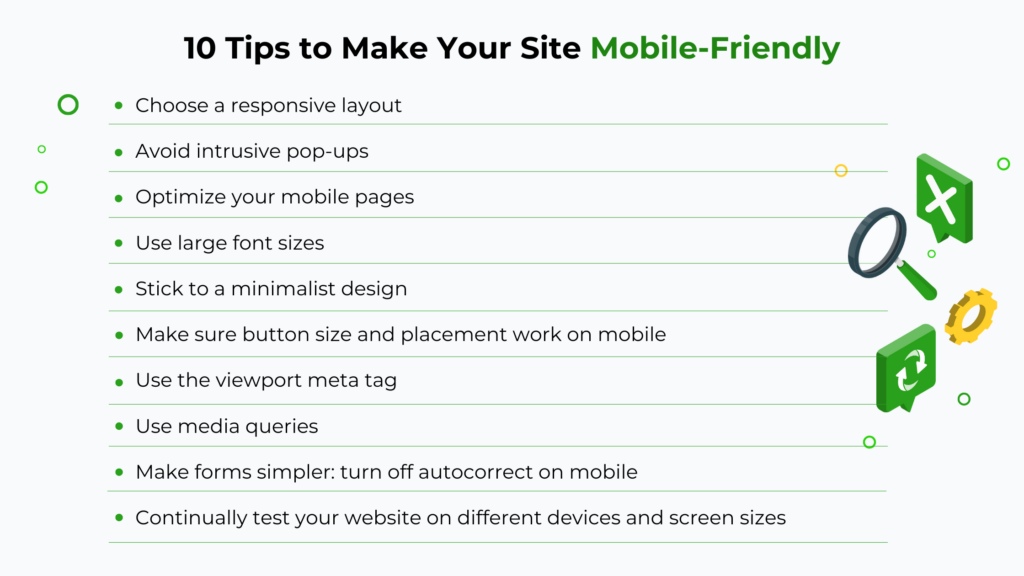

If your site is not mobile-friendly in terms of both performance and responsiveness, search engines and users will start to ignore your pages. In fact, mobile friendliness is such an important ranking factor that Google has created dedicated tools to measure mobile performance and responsiveness and has introduced mobile-first indexing.

To improve your mobile performance, you’ll need to pay attention to your JavaScript, as mobile processors aren’t as powerful as desktop processors. Code splitting, async or defer attributes, and browser caching are good starting points to optimize your JS for mobile devices.

To optimize your mobile site’s responsiveness and design, focus on creating a layout that reacts to the users’ screens (e.g., using media queries and viewport meta tags), simplify forms and buttons, and test your pages on different devices to ensure compatibility.

(Note: You can find a deeper explanation of mobile optimization in the links above.)

5. Optimize Your XML Sitemaps and Keep Them Updated

Your XML sitemap is a great tool to tell Google which pages to prioritize, help Googlebot find new pages faster, and create a structured way to provide Hreflang metadata.

To begin with, clean all unnecessary and broken pages from the sitemap. For example, you don’t want paginated pages on your sitemap, as in most cases, you don’t want them to rank higher on Google.

Also, including redirected or broken pages will signal to Google that your sitemap isn’t optimized and could make Googlebot ignore your sitemap altogether because it can see it as an unreliable resource.

You only want to include pages that you want Google to find and rank on the SERPs – including category pages – and leave out pages with no SEO value. This will improve crawlability and make indexation much faster.
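As a sketch of that clean-up step, the function below filters crawl results down to sitemap-worthy URLs. The tuple format and the pagination patterns are assumptions for this example, so adjust them to your own URL scheme.

```python
import re

# Hypothetical pagination patterns (?page=2, /page/3/); yours may differ.
PAGINATION = re.compile(r"[?&]page=\d+|/page/\d+/?$")


def sitemap_candidates(pages):
    """Keep only URLs worth listing in the sitemap.

    `pages` is an iterable of (url, status_code, indexable) tuples from a crawl.
    """
    keep = []
    for url, status, indexable in pages:
        if status != 200:  # drop redirected and broken pages
            continue
        if not indexable:  # drop pages you don't want indexed
            continue
        if PAGINATION.search(url):  # drop paginated pages
            continue
        keep.append(url)
    return keep
```

Running a filter like this on every sitemap build helps keep redirected, broken, and paginated URLs from creeping back in as the catalog changes.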

Enterprise websites also tend to have several localized versions of their pages. If that’s the case for you, you can use the following structure to specify all your Hreflang tags from within the sitemap:

    <url>
      <loc>https://prerender.io/react/</loc>
      <xhtml:link rel="alternate" hreflang="de" href="https://prerender.io/deutsch/react/"/>
      <xhtml:link rel="alternate" hreflang="de-ch" href="https://prerender.io/schweiz-deutsch/react/"/>
      <xhtml:link rel="alternate" hreflang="en" href="https://prerender.io/react/"/>
    </url>

This system will help you avoid common Hreflang issues that can truly harm your organic performance.
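With thousands of localized URLs, these entries are usually generated rather than written by hand. Below is a minimal sketch using Python’s standard library. The `build_sitemap` function and its input format are assumptions for illustration. Note that the `xhtml` prefix has to be declared on the root `urlset` element for the entries to be valid XML.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"
# Serialize with a default sitemap namespace and the xhtml: prefix.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("xhtml", XHTML_NS)


def build_sitemap(alternates_by_page):
    """Build a sitemap string from {loc: {hreflang: href}}."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, alternates in alternates_by_page.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        for hreflang, href in alternates.items():
            ET.SubElement(
                url,
                f"{{{XHTML_NS}}}link",
                {"rel": "alternate", "hreflang": hreflang, "href": href},
            )
    return ET.tostring(urlset, encoding="unicode")
```

Each localized version should get its own `url` entry listing the full set of alternates, itself included, so in practice you would call this with one key per language version.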

6. Set Your Robots Directives and Robots.txt File Correctly

When traffic drops, one of the most common causes we’ve encountered is an unoptimized robots.txt file blocking resources, pages, or entire folders, or misconfigured on-page robots directives.

Fix on-page robots directives

After website migrations, redesigns, or site architecture changes, developers and web admins commonly miss pages with NoFollow and NoIndex directives in the head section. When you have a NoFollow meta tag on your page, you’re telling Google not to follow any of the links it finds within the page. For example, if this page is the only one linking to subtopic pages, the subtopic pages won’t be discovered by Google.

NoIndex tags, on the other hand, tell Google to leave the page out of its index, so even if crawlers discover the page, they won’t add it to Google’s index. Changing these directives to Follow, Index (or simply removing them, since that is the default behavior) is a quick way to fix indexation problems and gain more real estate on the SERPs.
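To catch leftover directives after a migration, you can scan each page’s HTML for robots meta tags. The following is a minimal sketch using Python’s built-in HTML parser. The class and function names are assumptions for illustration, and it only checks meta tags, not the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def blocking_directives(html):
    """Return the directives on a page that limit indexing or link following."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return sorted(parser.directives & {"noindex", "nofollow", "none"})
```

Run it across the pages you expect to rank; any non-empty result on a page that should be indexed is worth a closer look.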

Optimize your Robots.txt Files

Your robots.txt file is the central place to control what bots and user agents can access. This makes it a powerful tool to avoid wasting crawl budget on pages you don’t want ranking on the SERPs. It’s also the most efficient way to block entire directories. But that’s also the main problem.

Because you can block entire directories, doing so without proper planning can block crucial pages, breaking your site architecture and preventing important pages from being crawled. For example, if you block pages that link to large amounts of content, Google won’t be able to discover those links, thus creating indexation issues and harming your site’s organic traffic.

It’s also common for some webmasters to block resources like CSS and JavaScript files to save crawl budget. Still, without them, there’s no way for search engines to properly render your pages, which can hurt your site’s page experience or cause a large number of pages to be treated as thin content.

In many cases, large websites can also benefit from blocking unnecessary pages from crawlers, especially to optimize crawl budget.

(Note: It’s also a best practice to reference your sitemap in your robots.txt file because well-behaved bots check this file before crawling the website.)

We’ve created an entire robots.txt file optimization guide you can consult for a better implementation.

Optimizing meta descriptions and titles is usually one of the first tasks technical SEOs focus on, but in most cases that’s because it’s an easy fix, not because it’s the most impactful.
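Before deploying a robots.txt change, it helps to test it against a list of URLs that must stay crawlable, including CSS and JavaScript assets. Here is a minimal sketch using Python’s standard `urllib.robotparser`. The sample file, the URLs, and the `blocked_urls` helper are assumptions for illustration. Keep in mind that this parser applies the first matching rule, which can differ from how Googlebot resolves conflicting Allow and Disallow rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt used only for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search/
Allow: /assets/

Sitemap: https://example.com/sitemap.xml
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt blocks for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

If a must-crawl URL such as a product page or a script bundle appears in the result, the rule that blocks it should be fixed before the file goes live.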

Improve Your Enterprise Site’s Technical SEO for Improved Visibility

These six tasks will create a solid foundation for your SEO campaigns and increase your chances of ranking higher. Each task will solve many technical SEO issues at once and allow your content to shine!

To help you improve your site’s indexing speed and performance, adopt Prerender.io. We ensure all your content and its SEO elements are easily found by search engine crawlers and users. No more incorrect content showing up on SERPs or products hidden from AI searches. Try Prerender.io today!

Looking for more technical SEO optimization tips to get more traffic from Google and AI search platforms? These guides can help: